Why AI Memory Matters More Than Model Choice for Marketing Teams

Most teams still ask which model to use. In my experience, that is no longer the main question. If your AI system forgets the client or brand, forgets the category, and forgets what "good" looks like, then even the smartest model in the world starts every conversation from zero.

Lately, whenever I talk with marketing teams about AI, the same question keeps coming up:

"Which model should we use?"

Claude? GPT? Gemini? Or some open-source model fine-tuned on our own data?

I understand why people ask. It sounds like a strategic question. It sounds like the most important part.

But I no longer think so. Not anymore.

In my experience, the bigger difference usually isn't in the model. It's in the memory.

If the AI system forgets everything that matters the moment a chat ends, every new task starts with the same expensive ritual:

- re-explaining the client or business

- re-explaining the audience

- re-explaining the tone

- re-explaining what has already been tried

- re-explaining what "good" looks like

At that point, you don't really have a system. You have a very impressive amnesiac.

And I say that with affection, because I've built a few of those myself :P

Models Are Smart. Systems Still Forget.

This has become clearer and clearer to me over the past year.

The model layer keeps improving at a crazy pace. Better reasoning. Better multimodal. Better coding. Better tool use. Lower latency. Shifting costs. Every few weeks there's a new benchmark, a new announcement, and one more reason to feel like you're falling behind.

But if I set all of that aside and look at what actually changes outcomes for a marketing team, the question is usually much simpler:

Does the AI remember enough context to make good decisions without being re-briefed from scratch every time?

That context is rarely glamorous. It isn't abstract "proprietary data." It's usually things like:

- which messaging the client or leadership team has already approved

- which offer underperformed last quarter

- which segment is too narrow to scale

- which claims legal will never allow

- which stakeholders need to feel involved

- which reporting view the client, CMO, or CFO actually trusts

- which definition of success matters in this organization

Without that memory, the model will still produce something polished. Sometimes very polished.

But polished is not the same as useful.

What I Mean by "Memory"

I'm not just talking about chat history.

I'm talking about a structured layer of context that is retained and compounds over time.

As I see it, there are at least three kinds of memory that matter for marketing teams.

1. Client memory

For an agency, this is the living context around the client. For an in-house team, it's the living context around the brand, the business unit, or the leadership priorities shaping the work.

- brand voice

- category realities

- approved positioning

- past campaigns

- stakeholder preferences

- known constraints

Same memory architecture, different payoff.

If you're at an agency, this memory compounds into better strategic output and stronger switching costs over time. If you're in-house, it becomes organizational memory and institutional resilience. When your best strategist or analyst leaves, does the knowledge leave with them?

This is what a new strategist usually learns slowly, through meetings, feedback, mistakes, and repetition. The issue isn't whether you call it client memory or organizational memory. The issue is that if you don't structure it deliberately, the context stays stuck in people instead of in the system.

2. Operational memory

This is the "how we work" layer.

- checklists

- channel-specific rules

- QA criteria

- campaign naming conventions

- reporting logic

- escalation paths

When teams fail to capture these things, they keep rediscovering the same operational truths. Usually under deadline pressure. Usually in a slightly different format each time.

3. Evaluation memory

This is the one I find most interesting.

It's not just memory of facts. It's memory of judgment.

What has the team rejected, and why? What did the client, CMO, or leadership team call "not quite right"? What patterns show up across the work that wins? What makes a brief useful, a plan strong, a report trustworthy, a setup ready to launch?

That's the layer that turns AI from an output machine into real leverage. (This is also one of the core ideas in my AI-Native Media Operations course: an operating model only works when judgment is structured into the system, not left to chance.)

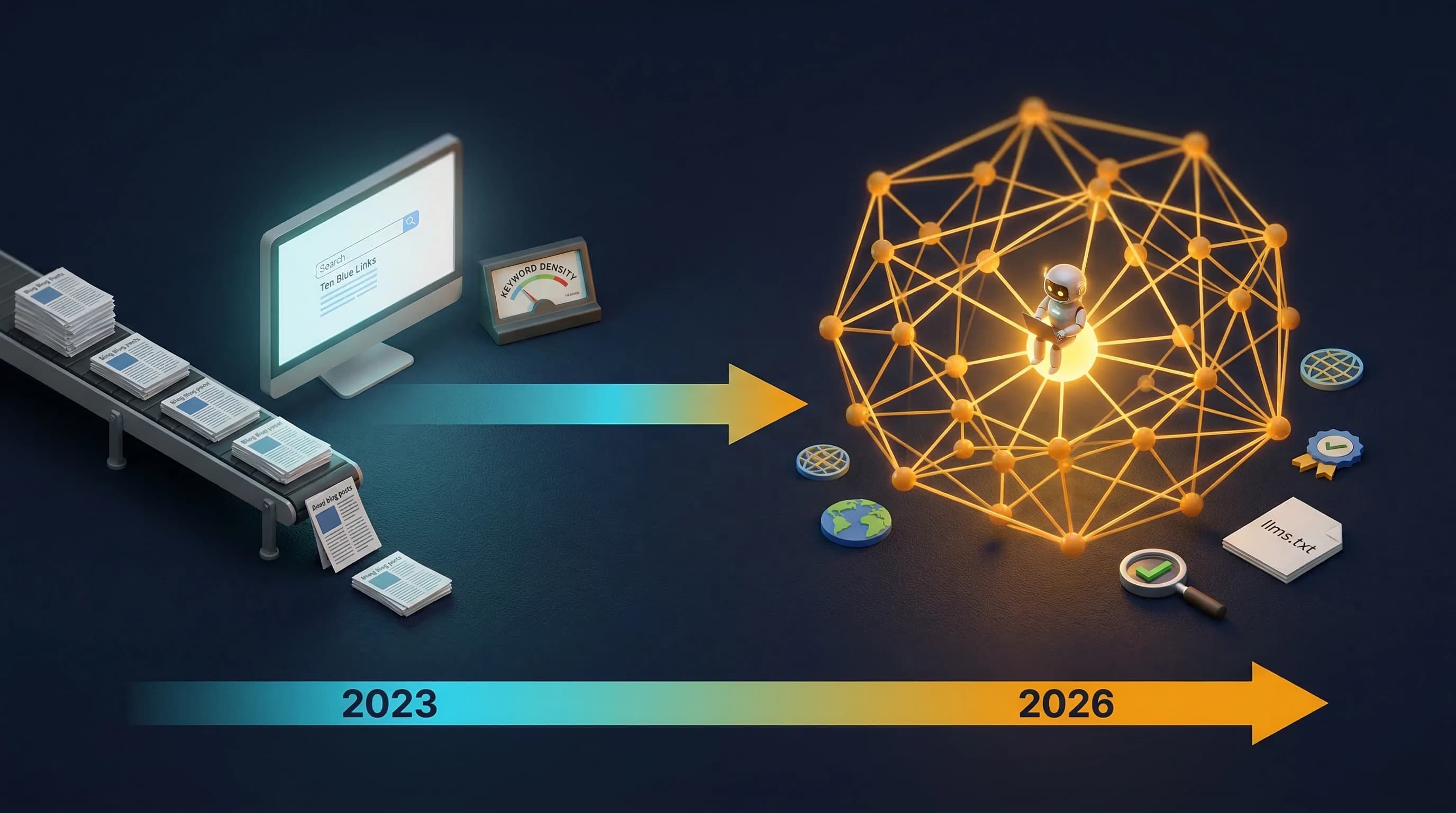
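To make "a structured layer of context" a bit more concrete, here is a minimal sketch of the three kinds of memory above as separate fields that get rendered into a prompt preamble. Everything in it is an assumption for illustration: the `MemoryLayer` and `TeamMemory` names, the `build_preamble` method, and the sample notes are hypothetical, not how any particular product stores memory.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryLayer:
    """One named layer of reusable context (hypothetical structure)."""
    name: str
    notes: list[str] = field(default_factory=list)

    def render(self) -> str:
        # Render the layer as a titled bullet list.
        bullets = "\n".join(f"- {note}" for note in self.notes)
        return f"{self.name}:\n{bullets}"


@dataclass
class TeamMemory:
    """The three layers, kept apart so each can be maintained on its own."""
    client: MemoryLayer
    operational: MemoryLayer
    evaluation: MemoryLayer

    def build_preamble(self, task: str) -> str:
        # Skip empty layers so the preamble only carries real context.
        layers = [self.client, self.operational, self.evaluation]
        sections = [layer.render() for layer in layers if layer.notes]
        return "\n\n".join(sections + [f"Task: {task}"])


# Made-up sample notes, purely illustrative.
memory = TeamMemory(
    client=MemoryLayer("Client memory", ["Brand voice: direct, not playful"]),
    operational=MemoryLayer("Operational memory", ["QA checklist runs before every launch"]),
    evaluation=MemoryLayer("Evaluation memory"),
)
preamble = memory.build_preamble("Draft a campaign recommendation")
print(preamble)
```

Keeping the layers as separate fields is a design choice, not a requirement: it lets a team review client facts, operating rules, and judgment calls on different schedules instead of treating them as one blob of context.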

Why Memory Compounds Faster Than Models

Models improve through vendor roadmaps.

Memory improves through your own work.

Those are two very different compounding curves.

If Anthropic or OpenAI ships a better model, you benefit. Of course. And I'm not dismissing that. Better reasoning definitely matters.

But your competitors benefit too.

That's the part I think people are underestimating.

A model improvement is usually widely distributed. A memory layer is not. I wrote about a related idea in AI Nâng Sàn Lên Cho Tất Cả (AI Raises the Floor for Everyone): when everyone has the same AI, depth becomes the differentiator. Memory is one form of that depth.

Shared context about the client or organization, evaluation criteria, accumulated lessons, operating standards, an internal language for what "good" means. Those things are built inside the organization. They get sharper with use. And they're much harder to copy than "we use the latest model."

In other words:

- the model is a rented advantage

- memory is a compounding advantage

Maybe I'm overstating it a little, but I don't think by much.

The Marketing Example I Keep Coming Back To

Imagine asking AI for a campaign recommendation for a client or for your own brand team.

A strong model can absolutely generate a reasonable answer. In many cases, a surprisingly good one.

But suppose it doesn't know that:

- the CEO hates brand language that sounds too playful

- the sales team doesn't trust MQL volume unless opportunity quality is clearly shown

- the last two YouTube tests underdelivered because a landing page mismatch was the real problem

- regional markets need different proof points

- finance has already capped paid social growth for the quarter

Then the answer may still look strategic.

It may even sound more strategic than the truth.

But in my experience, that is exactly when teams get into trouble with AI. They mistake fluency for situated intelligence.

The model sounds like it understands the business. What it actually understands is the shape of a good answer.

Those are not the same thing.

The Risk, of Course, Is Bad Memory

I have to be fair here.

Memory isn't automatically good. Bad memory scales bad assumptions. Old memory hardens outdated thinking. Unstructured memory becomes a junk drawer. And if you dump everything into "context," the system gets noisier, not smarter.

So I'm not arguing for unlimited memory.

I'm arguing for selective memory.

Useful memory.

The kind that helps a team answer:

- What should the AI know by default?

- What should stay scoped to a single task?

- What needs to be validated before it's reused?

- What should be retired because it no longer reflects reality?

In other words, memory needs stewardship. Just like content. Just like strategy.
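One way to picture that stewardship is as a small filter that runs before any memory reaches the model. The sketch below is only an illustration under my own assumptions: the `MemoryEntry` fields, the `select_context` function, and the 90-day freshness window are all hypothetical choices, and the sample entries are made up.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum
from typing import Optional


class Scope(Enum):
    DEFAULT = "default"  # the AI should know this on every task
    TASK = "task"        # only relevant to one named task


@dataclass
class MemoryEntry:
    text: str
    scope: Scope
    last_validated: date
    task: Optional[str] = None  # set only for task-scoped entries
    retired: bool = False       # True once it no longer reflects reality


def select_context(entries, task, today, max_age_days=90):
    """Split live entries into ones safe to reuse and ones needing review."""
    usable, needs_review = [], []
    cutoff = today - timedelta(days=max_age_days)  # assumed freshness window
    for entry in entries:
        if entry.retired:
            continue  # retired memory never reaches the model
        if entry.scope is Scope.TASK and entry.task != task:
            continue  # task-scoped memory stays with its own task
        if entry.last_validated < cutoff:
            needs_review.append(entry)  # validate before reusing
        else:
            usable.append(entry)
    return usable, needs_review


# Made-up entries, purely illustrative.
entries = [
    MemoryEntry("Approved positioning: reliability first", Scope.DEFAULT, date(2025, 5, 1)),
    MemoryEntry("Paid social growth is capped", Scope.DEFAULT, date(2024, 11, 1)),
    MemoryEntry("Landing page copy under test", Scope.TASK, date(2025, 5, 20), task="youtube-test"),
    MemoryEntry("Old tagline", Scope.DEFAULT, date(2025, 5, 1), retired=True),
]
usable, needs_review = select_context(entries, task="q3-plan", today=date(2025, 6, 1))
print([e.text for e in usable])
print([e.text for e in needs_review])
```

The point of the sketch is that each of the four questions maps to an explicit field or check, so "what should the AI know" becomes something a person can review rather than whatever happened to be pasted into context.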

What I Think Teams Should Build First

If I were helping a marketing team get serious about this, I'd start with a very unglamorous exercise.

Not prompt libraries. Not a model bake-off. Not "our AI strategy deck."

I'd start by identifying:

- Which context gets reused most often?

- Which mistakes keep recurring because the system forgets?

- Which criteria define acceptable output?

- Which client or brand knowledge should never have to be typed again?

Right away, you know what the memory layer should store.

And once that memory exists, model decisions become more valuable, because the models are operating on a much better foundation.

This is one of the reasons I'm increasingly more interested in shared memory architectures than in model debates. Models matter. But a system without memory produces a lot of fake productivity.

Everything looks fast. Nothing actually compounds.

Where I Am Now

I still care about models. I test them constantly. I use more than one. I like comparing them. They're genuinely useful.

But if you ask me where a marketing team's durable advantage comes from right now, I wouldn't start with the model.

I'd start with this question:

What does your AI system remember after the cool demo is over?

If the answer is "not much," then I think that's the real bottleneck.

That's part of the thinking behind how I'm building STRATUM. Not "one more chatbot," but a system where context compounds instead of disappearing. I may write more about that separately, because there is definitely a product angle here, but I think the operating model is bigger than any single product.

That's it from me.

I'd genuinely like to hear what other teams think about this. Are you spending more time choosing models or building memory? And have you found a way to keep shared context useful without turning it into a mess?

Cheers, Chandler