My reaction to "Bing AI can't be trusted"

I fact-checked the fact-checker's claim that Bing AI fabricated financial data. It turns out the made-up numbers problem is real, and worse than I had hoped.

This post was written in 2023, and some of its content may be out of date.

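Today I came across the article Bing AI can't be trusted, and it naturally caught my interest. The article runs a number of fact checks to show that the new Bing chat includes a lot of made-up factual information. It's fairly short, so go read it.

Here are a few quick reactions:

Surprised and not surprised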

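I'm generally aware of the limitations of large language models (LLMs), ChatGPT being one such model. The three main limitations are:

- It doesn't index web data other than text (such as video, audio, images, etc.)

- ChatGPT's data set is old (2021)

- These models make things up because they don't know which information source is more authoritative/trustworthy than the others.

So I hoped the Bing & OpenAI integration would solve all of the limitations above. Well, according to Dmitri's article, Bing hasn't solved them yet. Not even close.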

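Fact-checking the article

It wouldn't be good if what Dmitri reported was itself factually incorrect, so I did a few fact checks of my own. I started with the Gap financial statements because they seemed the most straightforward. I've included sources and screenshots below so you don't have to repeat the exercise yourself: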

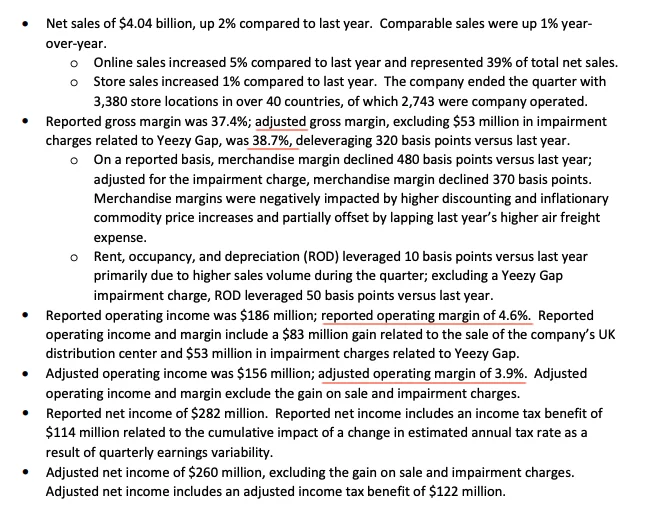

- This is the Gap Q3 2022 earnings release.

- I took the following screenshot from the Gap statement and highlighted the key numbers. Dmitri is right: Bing chat made up numbers such as adjusted gross margin, operating margin, etc.

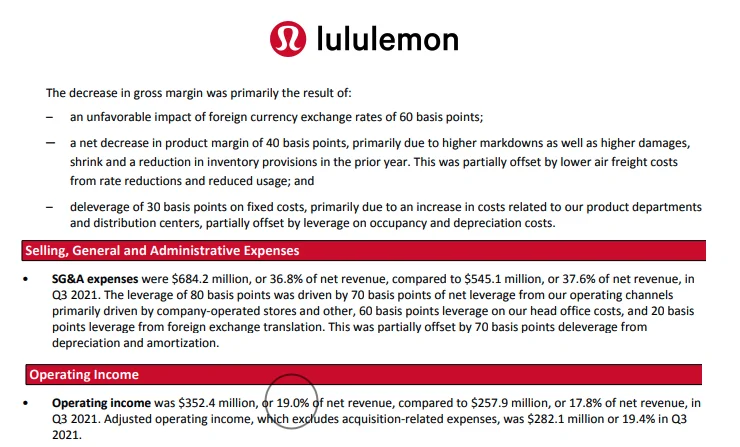

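What about the Lululemon numbers?

- This is Lululemon's Q3 2022 financial report. Again, I highlighted the key numbers mentioned in Dmitri's article. He is right: Bing search made up the numbers.

As for the Mexico City itinerary, I'm not an expert in this area, so I can't fact-check it carefully. For example, when I searched for "Primer Nivel Night Club - Antro" I found this Facebook page. But I can't confirm 100% whether Bing Search's suggestion is valid or not.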

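Where can we go from here

Clearly, at this point in time, the Bing & OpenAI integration has not yet fixed the problem of large language models (LLMs) making things up.

I'm not technical enough to understand how hard this issue is to solve. If even factual data can be this inaccurate, we need to be even more careful with more subjective topics, such as the best restaurants/plumbers/local services, personal finance, health, relationships, etc.

To be fair, Bing and OpenAI did say during the presentation that they understand the new technology will get many things wrong, which is why they designed the "thumb up/thumb down" interface so users can easily give feedback. Hopefully, with more user feedback, the machine will get better.

An algorithm to fact-check LLM output?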

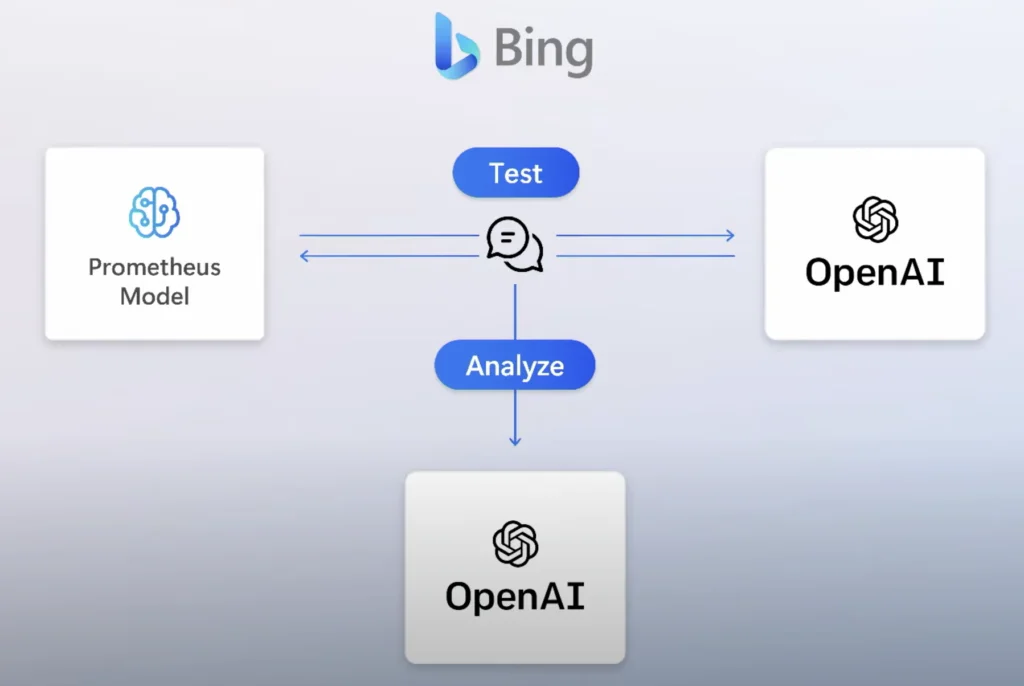

Since LLMs often produce wrong output, why not create an algorithm to continuously fact-check the output? This is similar to the safety algorithm Microsoft talked about, which they built into Prometheus and which simulates bad actors' prompts to the machine.
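To make the idea a bit more concrete, here is a minimal Python sketch of one very narrow kind of check: flagging numbers in a generated answer that never appear in the source document it is supposed to summarize. This is purely my own illustration, not how Bing or Prometheus works. The function names `extract_numbers` and `unsupported_numbers` are hypothetical, and the sample strings are invented for the example rather than taken from any real earnings release.

```python
import re

# Matches plain numbers with optional thousands separators and decimals,
# e.g. "1,234", "40.5", "2022".
NUMBER_PATTERN = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?")


def extract_numbers(text: str) -> set[float]:
    """Return every number found in the text, parsed as a float."""
    return {float(match.replace(",", "")) for match in NUMBER_PATTERN.findall(text)}


def unsupported_numbers(answer: str, source: str) -> list[float]:
    """List numbers that appear in the answer but nowhere in the source."""
    source_numbers = extract_numbers(source)
    return sorted(n for n in extract_numbers(answer) if n not in source_numbers)


# Invented sample data, for illustration only.
source = "Net sales were 1,000 million. Gross margin was 40.5%."
answer = "Net sales were 1000 million and gross margin was 42.0%."

print(unsupported_numbers(answer, source))  # [42.0]
```

A check like this only catches numbers that are absent from the source. It cannot tell whether a number that does appear is attached to the right metric, so a real fact-checker would need to do far more than this.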

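The role of humans

This technology still seems to be at an early stage, and although progress is exponential, the role of humans is critical. We can't trust the output yet, even with the Bing & OpenAI integration. The machine can get us roughly 50% of the way to the desired outcome, but we need to put in the other 50%.

There seems to be enough time for us to adjust, learn the strengths and limitations of this technology, and then use it effectively.

As for the engineers designing these systems, you probably need to do a better job of highlighting to end users which data points and sentences the machine is not sure about. Our human brains love taking shortcuts, so I'm pretty sure many of us (myself included) will take the lazy route and accept what the machine says as truth :P It's hard for us to stay alert 100% of the time.

Have you caught AI-generated answers being confidently wrong? I'd love to hear your examples, the more specific the better.

Best,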

Chandler