Update (Apr 22): The follow-up came early because the pattern settled faster than expected. Read: I'm Using Claude Code for Everything Else But Coding.

Update (Apr 3): I cancelled Claude Max and committed to publish a 30-day follow-up on May 2. Read: Why I Cancelled Claude Max After 13 Months and What I’m Testing with Codex Next.

Two weeks ago I wrote about dual-wielding Codex and Claude Code. That post resonated more than anything I have written — it turns out a lot of people are running the same experiment.

At the time, my working model was clear: Claude Code for execution quality and QA, Codex for architectural reasoning and sustained plans. Both tools, different strengths, cross-model review for important work.

Two weeks later, both tools have shipped significant updates and the balance has shifted. Not dramatically — but enough to be worth writing about. (For the record, I am drafting this post in Claude Code — not out of loyalty, but because my current billing cycle is still active and I do not want to waste money already committed. That kind of practical calculation is exactly the point of this post.)

March 2026 was intense for both platforms. Codex launched plugins with integrations for Slack, Gmail, Linear, Figma, Sentry, and more — plus Triggers for automated GitHub workflows, GPT-5.4 mini and nano models, and Windows native support. Claude Code shipped Agent Teams (multi-agent orchestration, still experimental), AutoMemory, Computer Use (macOS only, Pro/Max plans), Scheduled Tasks via /loop, and about 10 releases in March alone. Both platforms are moving fast.

The Newsletter Story (Why This Is Not Just About Code)

The observation that changed my thinking had nothing to do with writing code.

My site has a full newsletter system — subscribe form, post CTAs, welcome email, daily cron, double opt-in, 13-locale support. Technically, everything works. The problem: zero verified subscribers.

I drafted a plan to fix this: extract a lead magnet PDF from my course, gate Module 1 behind email, add mid-article CTAs, hook the AI chatbot into the subscribe flow, redistribute through YouTube and LinkedIn. Seven new things.

I built this plan with Claude Code. It felt productive.

Then I gave the same brief to Codex. The pushback was immediate.

The lead magnet was redundant — Module 1 is already free. Too many surfaces at once — if you build all seven, you cannot tell which one works. The problem is not infrastructure, it is copy. "Stay in the loop" is generic. The verification email is not persuasive enough. The interest selection adds friction.

Codex's plan: fix what exists first (rewrite copy, improve the verification email, reduce friction), add one new surface (an inline blog CTA), measure with GA events before building anything else.

My plan was "build more things." Codex's plan was "make the existing things work better, then test one new thing." Mine would have taken a week with no way to know what worked. Codex's can ship in a day and tells you exactly where to invest next.

I have to admit — this caught me off guard. Not because Claude is bad at strategy. I think if I had prompted more carefully — "challenge my assumptions before executing" — I might have gotten a similar pushback. But the default reasoning style was noticeably different. GPT-5.4 defaulted to "question the premise." Claude defaulted to "execute the plan well."

That distinction matters for product decisions.

Speed and Steering

Two things I have noticed that affect daily workflow more than expected.

Speed and token efficiency: Codex with GPT-5.4 on high thinking is consistently faster than Opus 4.6 on high thinking for equivalent tasks. Third-party comparisons suggest Codex uses roughly 3x fewer tokens for similar work — one benchmark measured 1.5 million tokens on a Figma-style task where Claude used 6.2 million. Claude "thinks out loud" more, which produces higher-quality reasoning but burns through limits faster. Starting around Mar 20, Opus seems to be making more tool calls than usual — more intermediate steps before reaching the answer. I do not know if that is a model change or a coincidence, but it is perceptible.

Real-time steering: When I send a new message while the tool is working — "wait, not that direction, try this instead" — Codex reads it almost immediately and adjusts. Claude Code tends to finish its current execution before reading the correction.

That sounds small. It is not. When you are watching an agent go down the wrong path and you want to course-correct, the lag between "reads your correction now" and "reads it after finishing the current operation" compounds across a full work session.

The SSE Bug: A Concrete Example

I was building a new iOS app. Claude Code had produced 40 Swift files across all features — auth, agents, chat, frameworks, dashboard, profile. Impressive breadth. But one critical bug remained: SSE streaming for real-time chat would not work.

The backend was fine. Curl worked. But URLSessionDataDelegate.didReceive(data:) would not fire in the Swift client. Claude Code worked on this for hours. Multiple approaches, multiple debugging sessions.

I gave the same problem to Codex. A few attempts later: commit 7f592152 — "fix(ios): restore real-time chat streaming."

Is this representative? Maybe not. Every tool has good days and bad days. But from my experience, when Claude Code gets stuck in a debugging loop — trying increasingly clever variations of the same approach — switching to Codex often breaks the deadlock because GPT-5.4 frames the problem differently from the start.

Where Claude Code Still Wins

It would be easy to read this post and conclude that Codex is pulling ahead across the board. That would be wrong. Claude Code shipped hard this month too, and several of its advantages have actually grown.

Agent Teams. This launched in February and has been maturing through March. Multiple Claude Code instances working in parallel — an explorer, a code reviewer, an implementer, a test runner — with dependency tracking and shared task lists. It is still experimental and disabled by default, but when enabled, it is genuinely impressive. Codex has multi-agent support too (tasks run in isolated cloud containers), but Claude Code's Agent Teams feel more coordinated. For large refactors touching many files, Agent Teams are currently the better experience.

AutoMemory. Claude Code now automatically writes memory rules based on your habits. After a few sessions, it knows your project structure, your naming conventions, your preferences. It is subtle but the cumulative effect is that Claude Code sessions get more productive over time in a way that Codex sessions currently do not.

Frontend design. Claude Code with the /frontend-design plugin still produces noticeably more polished, design-system-aware UI than Codex with the equivalent skill. I tested this directly during a site redesign on Mar 26. The Claude output had better spatial composition, more consistent styling, and a more cohesive result. This might be a harness advantage (Claude's plugin system runs the skill with more context), but the practical result is clear.

Code quality. A community analysis of 500+ developer comments on Reddit found that developers preferred Claude Code's output in about 67% of blind comparisons — noting cleaner, more idiomatic, better-structured code. That matches my experience. When the code needs to be maintainable, not just functional, Claude Code has an edge.

Automatic QA. Still the killer feature. After completing work, Claude Code automatically dispatches review agents — code review, consistency checks, gap analysis — without me asking. Codex does not do this yet. For anything where correctness matters more than speed, this alone keeps Claude Code in the workflow.

The Reliability Question

I want to share something that most comparison posts avoid.

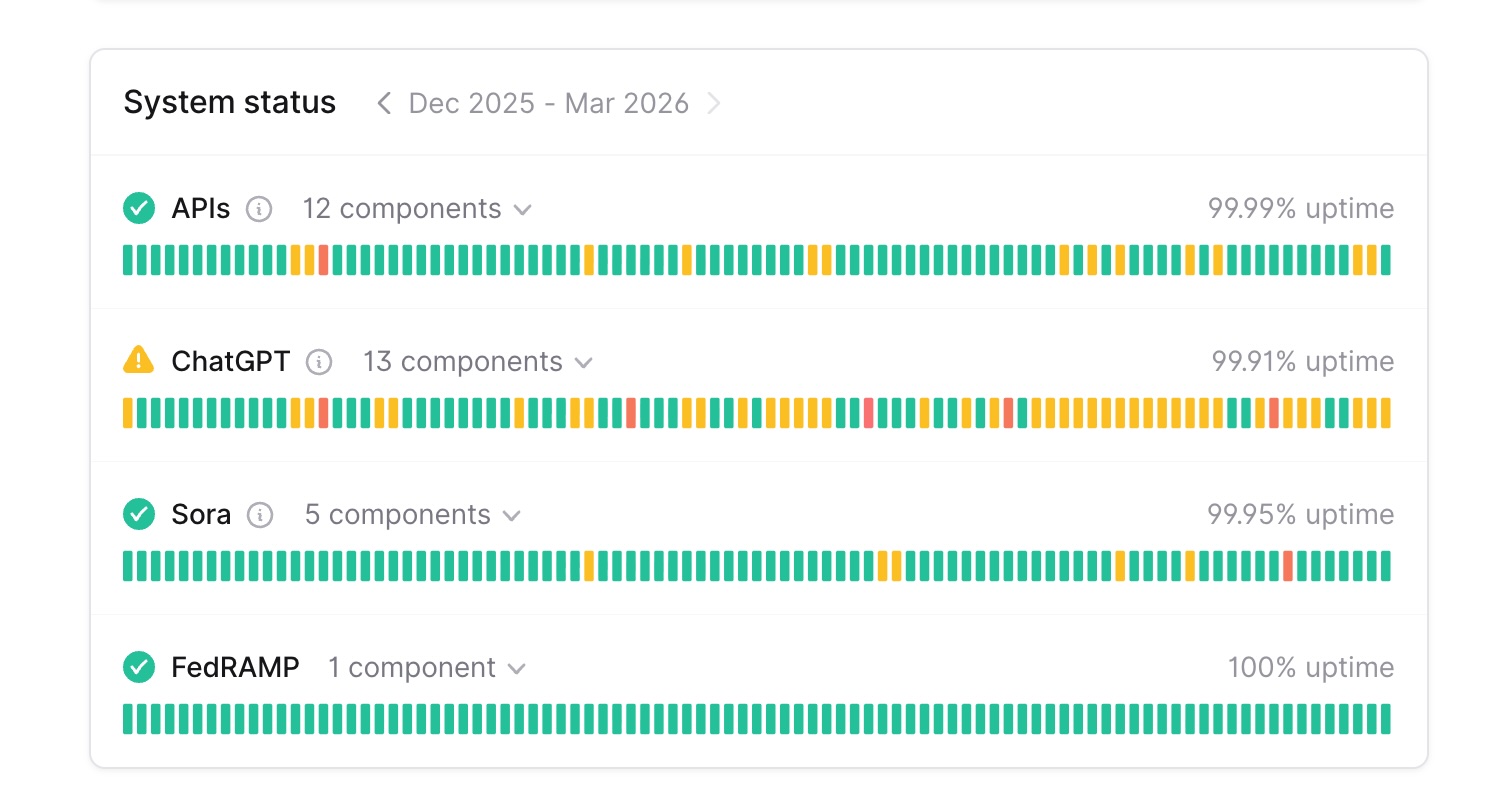

Here are the 90-day uptime numbers from both status pages as of late March 2026:

| Service | Anthropic | OpenAI |

|---|---|---|

| Main platform | claude.ai: 99.16% | ChatGPT: 99.91% |

| API | api.anthropic.com: 99.24% | APIs: 99.99% |

| Developer tools | Claude Code: 99.48% | — |

| Console | platform.claude.com: 99.41% | — |

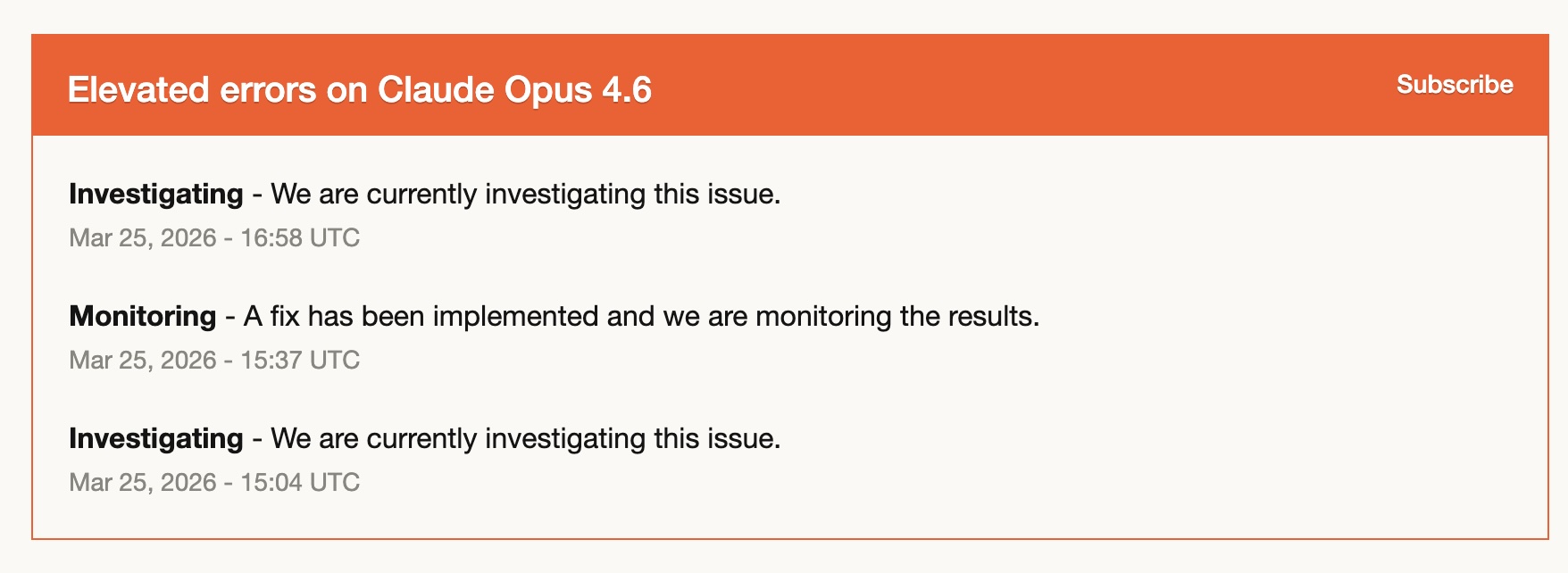

The gap is real. Over 90 days, Anthropic's services experienced roughly 8-10x more downtime than OpenAI's. On Mar 25, there was a specific incident — "Elevated errors on Claude Opus 4.6" — with an investigating-fix-investigating cycle that lasted almost two hours.

In fairness, this is not the full picture. Reliability is not just uptime. BeyondTrust's Phantom Labs publicly disclosed a command injection vulnerability in Codex that could have exposed GitHub authentication tokens through branch name manipulation. The flaw affected the web UI, CLI, SDK, and IDE integrations — a user-controllable branch name was passed directly into a shell command without sanitization. OpenAI patched it, but it is a reminder that stability and security are different dimensions of reliability, and both matter.

I am sharing the uptime data not to bash Anthropic. I use Claude Code every day and it remains excellent. But for anyone building their professional workflow around these tools, the numbers are worth knowing. And this is exactly why dual-wielding is not just nice to have — when one service has a bad afternoon, you switch and keep working. I have done this three times in two weeks.

The Plugin Gap Is Closing

In my original post, I noted that Claude Code's plugin ecosystem was more mature. That was true two weeks ago. It is less true today.

Codex launched its plugin system on Mar 27 with integrations for Slack, Gmail, Google Drive, Linear, Figma, Sentry, Notion, and Hugging Face. Plus skills, hooks (including SessionStart and UserPromptSubmit events), MCP servers, and a plugin directory in both the app and CLI.

The feature set is converging. Both tools now have: plugins/skills for reusable workflows, hooks for event-driven automation, MCP server integration, and app-level integrations with external services.

Where Claude Code still leads: the existing plugin ecosystem is deeper. Plugins like Superpowers (structured planning), /feature-dev (guided development), and /frontend-design have been refined over months. Codex's plugin directory is newer and the individual plugins are less battle-tested.

Where Codex is pulling ahead: Triggers. Codex can auto-respond to GitHub events — an issue arrives, Codex auto-fixes it, opens a PR. That is a new category of automation that Claude Code does not offer yet. For teams that want autonomous engineering workflows, Triggers are a significant differentiator.

My Updated Working Model

Two weeks ago, I split work roughly 60/40 Claude Code/Codex. I had a clear mental model: reach for Claude Code when you need quality, reach for Codex when you need architectural reasoning.

That neat split has dissolved. I now use both throughout the day, switching based on feel more than rules. Codex for one task, Claude Code for the next, sometimes both reviewing the same plan. The tools are close enough in capability that the "which one should I use for this?" question matters less than it did two weeks ago.

What changed is the economics.

OpenAI's Plus plan is $20/month with increasingly generous limits. I have found myself reaching for Codex more and more — not because it is dramatically better at any one thing, but because the combination of speed, token efficiency, and that $20 price point removes friction. There is no mental calculation of "is this task worth burning Claude Code tokens on?"

I am leaning toward reducing my Claude Code plan from the $200/month Max tier to the $100/month plan, possibly even the $20/month Pro plan. Two weeks ago, that would have felt risky. Now it feels practical. The work I need Claude Code to be excellent at — frontend design, Agent Teams orchestration, the automatic QA that catches things I would miss — those are real advantages. But they may not require $200/month if Codex handles half my workload at $20.

I am aware this bet has risks. The $20 Claude Code tier has real usage limits — if I hit them during a critical session, I will regret the downgrade. And OpenAI's generous $20 limits are likely a market-share play that may not last forever. But right now, the economics favor dual-wielding.

The total cost ($20 Codex + $100 or even $20 Claude Code) would be less than what I was paying for Claude Code alone. And the combined output is better than either tool solo at any price.

That is maybe the most practical takeaway from two weeks of dual-wielding: the competition is not just making the tools better. It is making them cheaper. And cheaper means you can afford both.

What I Expect Next

Both platforms are accelerating. Codex just launched plugins, triggers, and a Windows client. Claude Code just shipped Agent Teams, AutoMemory, Computer Use, and Scheduled Tasks. Neither is standing still.

A recurring theme in developer communities on Reddit — and I think it captures something real — is that "Claude Code is higher quality but you hit limits. Codex is slightly lower quality but more usable day-to-day." The balance shifts as both improve.

My advice remains the same as the first post, but stronger now: try the other tool for a week. Not to switch — to add. The cross-model review workflow is still the best discovery I have made. And the operational resilience of having two tools you trust will save you on the day one of them goes down.

As a user, this is the best possible situation. Two excellent tools getting better fast, each pushing the other forward. The pace of competition is so fierce that I do not think any company can stay comfortably ahead for long — which is exactly why betting on one tool feels increasingly risky and betting on the workflow (dual-wielding, cross-model review) feels increasingly right.

Frequently Asked Questions

Has your opinion changed since the first post?

The core thesis — dual-wielding beats picking a winner — has only gotten stronger. What changed is the split (60/40 became 50/50) and the reasons. Codex's strength in strategic reasoning surprised me more than its coding improvements.

Is Codex faster than Claude Code?

On high thinking, yes — consistently faster, and third-party comparisons suggest it uses roughly 3x fewer tokens for equivalent tasks. On default thinking, the gap is smaller. For iterative work where you go back and forth frequently, the speed and token efficiency add up.

Should I worry about Claude Code's uptime?

The 90-day numbers show a real gap (99.2% vs 99.9%). If Claude Code is your only tool and you are working against a deadline, have a backup plan. But Anthropic shipped about 10 Claude Code releases in March alone — they are iterating fast on features even if reliability is trailing OpenAI.

What about the Codex security vulnerability?

A command injection flaw in Codex could have exposed GitHub tokens through branch names. It was discovered and addressed. Worth knowing, but also worth noting that security researchers actively test these tools — which is a good thing for the ecosystem.

Is the newsletter strategy story really about the tools?

Partly. Different models have different default reasoning styles. GPT-5.4 was more likely to challenge my assumptions. Claude was more likely to help execute my plan well. Both are useful — but for product strategy, "are you solving the right problem?" is often more valuable than "here is a good implementation."

Which tool should I buy?

Both. That is not a cop-out — it is genuinely the best answer. Codex at $20/month plus Claude Code at $20-100/month gives you better results than either tool alone at any price. I am leaning toward dropping from $200/month on Claude Code to $100 or even $20, and adding Codex at $20. The total cost goes down and the output goes up. That said, OpenAI's generous limits may not last — so stay flexible.

That's it from me. If you have been running your own dual-wielding experiment, I would genuinely like to hear how your split is evolving. Same patterns, or something completely different?

Cheers, Chandler