On April 3, 2026, I said I would come back in 30 days and tell the truth about what happened after cancelling Claude Max.

I am showing up eleven days early, not because I am impatient, but because the pattern has already settled and waiting until May 3 would mainly be theatre.

The punchline sounds wrong at first. A tool called Claude Code is now the tool I use for almost everything except code.

The short version is this:

- Codex with GPT-5.4 on xHigh thinking has taken the coding seat.

- Claude Code with Opus 4.7 on xHigh has taken every other seat at my desk.

- The cheap-Codex story broke the moment real limits showed up.

If you want the cleanest thesis, it is this:

Codex-GPT-5.4 on xHigh for anything coding related. Claude Code with Opus 4.7 on xHigh for everything else.

It is a sharper, more expensive, and more psychologically revealing split than I expected nineteen days ago.

What broke first was not code quality

When I wrote the cancellation post, the attractive story was simple:

- Claude Max: $200/month

- Codex / ChatGPT: $20-25/month

- execution quality gap shrinking fast

- OpenAI reliability looking stronger

That looked like an easy downgrade.

It was not.

The first thing that broke was not architecture, plan quality, or execution discipline.

It was the limits story.

My real timeline looked more like this:

- Apr 6: I signed up for two ChatGPT Business seats at $25/month each on monthly billing.

- Apr 6: I was already thinking in workaround terms. To keep roughly the same coding pace, I suspected I might need about three Codex-capable accounts.

- Apr 10: After a few days of trying to live in that setup, I had enough evidence to say it out loud: three cheap accounts still did not recreate what the Claude Max plan had given me at its best.

- Apr 12: I bought ChatGPT Pro at $100/month because the more I used Codex for pure coding work, the more I wanted more of GPT-5.4 at high and xHigh reasoning, not less.

That is the first honest correction to the Apr 3 post.

The replacement was not "$200 Claude Max becomes $20 Codex."

It was closer to:

- "I do not need the same Anthropic plan I used to need."

- "I do need more Codex capacity than the cheap tier gives me."

- "The real comparison is workflow quality under actual limits, not benchmark screenshots."

If you build your whole decision on today's $20 economics, you should at least admit that pricing and limits can change quickly.
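
To put the numbers from that timeline side by side, here is a small Python sketch. It uses only the monthly prices quoted above. Pricing a third cheap account at $25 is an assumption made for illustration, and the sketch says nothing about which subscriptions were kept running in parallel.

```python
# Monthly prices in USD as quoted in this post; not an official price list.
CLAUDE_MAX = 200
CHATGPT_BUSINESS_SEAT = 25
CHATGPT_PRO = 100


def monthly_cost(unit_price: int, count: int = 1) -> int:
    """Total monthly cost for `count` subscriptions at `unit_price`."""
    return unit_price * count


options = {
    "Claude Max": monthly_cost(CLAUDE_MAX),
    "2 Business seats (bought Apr 6)": monthly_cost(CHATGPT_BUSINESS_SEAT, 2),
    # Assumption: a third cheap account would cost the same $25 seat price.
    "3 cheap accounts (suspected workaround)": monthly_cost(CHATGPT_BUSINESS_SEAT, 3),
    "ChatGPT Pro (bought Apr 12)": monthly_cost(CHATGPT_PRO),
}

for name, cost in options.items():
    # Show each option and its difference from the old Claude Max bill.
    print(f"{name}: ${cost}/month ({cost - CLAUDE_MAX:+d} vs Claude Max)")
```

The point of the comparison is the middle of the table: the realistic coding plan sits well above the $20-25 tier, even if it still sits below the old $200 bill.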

Codex took the coding seat

The biggest thing I learned is that GPT-5.4 on xHigh thinking feels like a coding specialist.

Not a charismatic one.

Not a warm one.

Not especially interested in becoming your friend.

But a specialist.

There is a bareness to Codex I ended up liking. It feels like a no-nonsense engineer: careful, methodical, and not trying to perform personality at you. That matters more than I expected during long workdays.

The clearest moment for me was Apr 17. I gave Codex-GPT-5.4 on xHigh an agreed plan and let it work. It ran continuously for thirty to forty-five minutes on a single session, following the plan closely, writing and executing unit tests, integration tests, and browser tests locally, without me needing to sit on top of it the whole time.

I have not had another tool do that for me with the same consistency.

That changed how I think about Codex. It is not just "good enough on small tasks." It can execute a clear plan for a long stretch with discipline.

For pure coding work, I now rely on Codex almost all the time:

- architecture

- implementation

- debugging

- eval design

- test framing

- pipeline cleanup

- production-minded product logic

The best April example is Prova.

Most of the useful work in Prova was not glamorous. It was:

- evaluating composable sprints

- tightening onboarding logic

- finding contradictions between roadmap generation and assigned sprint state

- improving the product based on real user rows and actual production behavior

That kind of work turns out to fit Codex very well.

It is competent, thorough, and surprisingly sharp when the job is:

- identify the contradiction

- isolate the logic bug

- separate correctness from RFC territory

- recommend the smallest defensible next move

One of the clearest examples came out of a single Prova production row for a builder-intent user.

The system had:

- labeled a builder-intent user as marketing_vp

- assigned table-stakes-diagnostic

- generated a roadmap that started later in the journey

- shown a Context Check card immediately after onboarding

What made that episode useful was the cross-review loop, not just the diagnosis.

Opus 4.7 got me to the first three issues:

- one track-calculation bug

- one timing bug

- one builder-opener / RFC question

Then GPT-5.4 pushed the analysis further and caught the contradiction Opus had missed:

- the assigned first sprint and the generated roadmap were already telling the same user two different truths

That mattered. It turned the conversation from "should we ship the Builder RFC now?" into "fix the live contradiction first, then revisit the RFC."

It did not feel like a model trying to impress me. It felt like a senior SWE saying: fix the current contradiction first, then talk about the product theory.

That pattern repeated enough times this month that I no longer think of Codex as "the cheaper alternative." I think of it as my primary coding instrument.
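
The contradiction in the builder-intent row is the kind of thing a small invariant check can catch. The sketch below is illustrative only: the `UserPlan` shape, its field names, and the placeholder sprint ids are hypothetical, since the real Prova schema is not shown in this post.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class UserPlan:
    # Hypothetical shape; field names are assumptions for illustration.
    assigned_first_sprint: str
    roadmap: List[str]  # sprint ids in the order the roadmap presents them


def first_sprint_contradiction(plan: UserPlan) -> Optional[str]:
    """Return a description of the mismatch, or None if the two agree."""
    if not plan.roadmap:
        return "a first sprint is assigned but the roadmap is empty"
    if plan.roadmap[0] != plan.assigned_first_sprint:
        return (
            f"assigned first sprint is {plan.assigned_first_sprint!r} "
            f"but the roadmap starts at {plan.roadmap[0]!r}"
        )
    return None


# Placeholder roadmap ids; only the assigned sprint name comes from the post.
row = UserPlan("table-stakes-diagnostic", ["some-later-sprint", "another-sprint"])
print(first_sprint_contradiction(row))
```

A check like this does not settle the RFC question. It only makes the "two different truths" problem visible before any product theory gets discussed.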

Claude Code took every other seat

If the story stopped there, the answer would be simple: switch to Codex and move on.

That is not what happened.

Claude Code with Opus 4.7 is still better for work where the output depends on taste, or where the loop is long, iterative, and context-heavy.

That is where the title stops sounding contradictory and starts sounding literal. Claude Code, the CLI I used to reach for first when it was time to write code, is now the tool I reach for first when the work is anything except code.

By everything else I mean:

- writing that sounds like me

- iterating on structure and rhythm

- naming and positioning

- taglines and brand phrasing

- image prompts for blog post covers

- logo and identity exploration

- stronger /frontend-design passes when the work needs visual judgment instead of just functional UI

- long research threads where I am building up a point of view across several sessions

Over the last nineteen days, this has held up repeatedly.

The gap is clearest when there is a feedback loop. Both Claude and Codex can read historical posts, inspect past prompts, and use existing work as reference. But when I am doing multiple rounds of refinement with feedback after each attempt, Claude still tracks the direction I want more reliably across writing, image prompting, and naming.

The clearest product example is Prova. I did not land on that name with Codex. GPT-5.4 gives me solid, competent answers. Opus gave me stronger creative range.

The same pattern shows up in site design too.

The /frontend-design workflow under Superpowers still works better for me on Opus than on GPT-5.4 when the work is design-led rather than engineering-led. Codex gives me something functional. Claude more often gives me something I would actually want to ship with pride.

So if the question is:

"Did Codex replace Claude for everything?"

No.

It replaced Claude for the coding seat. It did not replace Claude for everything else.

That distinction matters.

Familiarity was real, and so was withdrawal

I had been using Claude Code for about 13 months.

That length of time changes your body language, not just your preference list.

When I switched over to Codex more aggressively, I noticed a kind of withdrawal effect.

Not because Codex was bad.

Because it was different.

The friction points were small, but persistent:

- certain permission confirmations

- the feeling that it asked one question too many in some moments

- the sterile tone of the responses

- the lack of that familiar Claude "voice" in the loop

These are not objective defects. They are workflow differences, and familiarity changes how you experience them.

I do not want to pretend I navigated that transition like a pure rational economist. I did not.

At one point I caught myself running work through Claude as a safety-net review pass, not because I needed the second opinion, but because the pull was stronger than my own "I am switching cleanly" narrative.

What it taught me is that the real dependency was not just on Claude's raw capability. It was on the feeling of safety that came from having another intelligent system in the loop that I trusted differently.

That is why the final answer for me was never going to be "pick one forever." The psychology of the workflow matters too.

Codex has its own failure modes

This stretch was not one long string of Codex victories. I hit real issues too.

The first one that felt like a true Codex-specific problem happened on Apr 14. Across two terminal sessions I hit this error:

    {
      "error": {
        "message": "Unknown parameter: 'prompt_cache_retention'.",
        "type": "invalid_request_error",
        "param": "prompt_cache_retention",
        "code": "unknown_parameter"
      }
    }

That kind of thing matters because it breaks trust at the harness layer, not the reasoning layer.
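
One way to read that payload, sketched in Python: the error type and code describe a rejected request parameter, which points at how the request was built rather than at anything the model reasoned about. The helper name `describe_request_error` and the keys it returns are made up for this example; only the JSON itself comes from the session.

```python
import json

# The payload from the Apr 14 sessions, collapsed onto one line.
RAW_ERROR = (
    '{"error": {"message": "Unknown parameter: \'prompt_cache_retention\'.", '
    '"type": "invalid_request_error", "param": "prompt_cache_retention", '
    '"code": "unknown_parameter"}}'
)


def describe_request_error(raw: str) -> dict:
    """Summarise an error payload of the shape shown above (hypothetical helper)."""
    err = json.loads(raw).get("error", {})
    unknown_param = err.get("code") == "unknown_parameter"
    return {
        # True when the service rejected the request itself.
        "request_rejected": err.get("type") == "invalid_request_error",
        # The parameter the service did not recognise, if that was the cause.
        "rejected_param": err.get("param") if unknown_param else None,
        "message": err.get("message"),
    }


print(describe_request_error(RAW_ERROR))
```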

I also noticed that Codex seems stricter about what the model is allowed to do around deployment or touching production, even after approvals had already been granted earlier in a session.

Sometimes that is good.

Sometimes it is annoying.

But it is definitely part of the real user experience.

One small example I genuinely prefer on the Codex side: checking status and limits without interrupting the main flow. Being able to run /status without needing to wait for the current work to finish is a small thing, but over nineteen days those small ergonomics add up.

That is what I mean when I say the tools are not just different in output quality. They are different in harness feel.

Reliability still matters, and April did not help Claude's case

One of the reasons I felt comfortable making the switch in the first place was reliability.

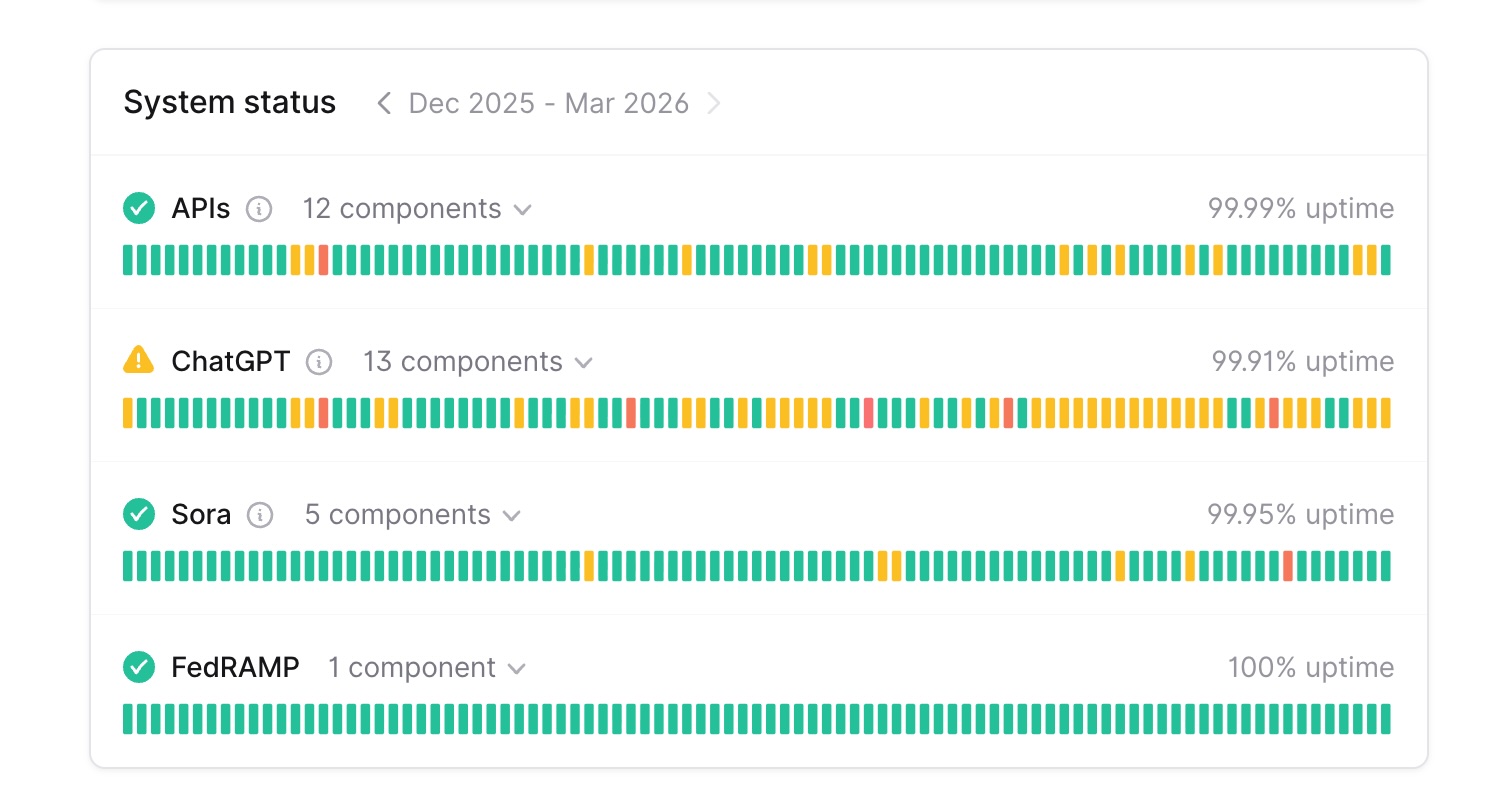

I had already written about the 90-day status difference between Anthropic and OpenAI in the Mar 31 follow-up. The broad picture still matters:

And then April added another real-world reminder.

On Apr 15, Claude had elevated errors again across Claude.ai, the API, and Claude Code. Login was affected. The API recovered first. Claude Code users who were already logged in could still work, but login itself was broken for a while.

That does not erase Claude's strengths.

It does strengthen the practical case for not staking your entire workflow on one vendor.

This is still one of the most durable lessons from the whole experiment:

Dual-wielding is not just a luxury. It is operational resilience.

When one provider has a bad afternoon, you keep shipping.
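
In my case the switch is manual: I open the other tool. The same logic can be written down as a fallback loop, shown here as a rough Python sketch. `Provider` is a hypothetical stand-in for "something that takes a prompt and returns text", not a real SDK call, and the two demo functions are fakes.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical stand-in: any callable that takes a prompt and returns text.
Provider = Callable[[str], str]


def run_with_fallback(
    prompt: str, providers: Sequence[Tuple[str, Provider]]
) -> Tuple[str, str]:
    """Try providers in order; return (name, output) from the first success."""
    failures: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure means "try the next one"
            failures.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(failures))


def primary_down(prompt: str) -> str:
    # Fake provider that simulates an outage.
    raise ConnectionError("elevated errors")


def backup_ok(prompt: str) -> str:
    # Fake provider that simply echoes the prompt.
    return f"handled: {prompt}"


print(run_with_fallback("review this plan", [("primary", primary_down), ("backup", backup_ok)]))
```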

Opus 4.7 sharpened the pattern, it did not flip it

Claude Opus 4.7 dropped on Apr 16. Publishing any kind of honest verdict without running it seriously would not be fair.

So I put it against a real Prova coding diagnostic almost immediately. Opus 4.7 gave me a solid first pass: the track bug, the mistimed Context Check card, and the broader Builder-opener question.

I then ran that diagnosis back through GPT-5.4 as a critique pass. GPT-5.4 caught the sharper contradiction Opus had missed: the product was already assigning one first sprint while generating a roadmap that started somewhere else. That was not just a Builder-fit issue. It was a live correctness problem.

When I fed that revised diagnosis back through Opus, it converged.

The surprise came in the other direction.

On Apr 19, I noticed something I had not expected: for simpler tasks — a short code review pass, a focused execution against a small change — Opus 4.7 on xHigh is noticeably slower than Codex-GPT-5.4 on xHigh. I had expected the opposite.

That one detail re-anchored my pattern.

Where Opus still clearly wins for me is the taste-heavy, iterative, long-context work I described above. Where Codex clearly wins is deep coding diagnosis and the fast, everyday coding turns I used to reach for Claude on.

So the honest version is:

- Opus 4.7 can still give me a strong first pass on deeper coding analysis

- On lighter coding turns, it currently feels slower than GPT-5.4 at the same thinking level

- Combined with the Apr 17 continuous-execution experience, that pushed the coding seat decisively toward Codex

Where I actually landed

If you strip away the drama, the pricing screenshots, the outage screenshots, and the social-post framing, as of Apr 22 my working pattern has settled:

For anything coding-related

I reach for Codex with GPT-5.4 on xHigh thinking first.

Why:

- it feels specialized

- it is methodical

- it is careful

- it handles architecture and execution well

- it can run a full agreed plan with unit, integration, and browser tests for thirty to forty-five minutes at a stretch

- on everyday coding turns it is faster than Opus 4.7 on xHigh at the moment

- it is easier for me to trust on deep coding work now

For everything else

I reach for Claude Code with Opus 4.7 on xHigh thinking.

That now covers:

- writing that has to sound like me

- image prompts for cover art

- naming, positioning, and brand language

- /frontend-design passes that need visual judgment

- long research threads where I am building a point of view across sessions

- reviewing my own plans before handing them to Codex

Why:

- it follows my style better

- it handles feedback-driven creative iteration better

- it is stronger on brand taste and design taste

- it is still the model I trust most when the job is judgment, not execution

For important coding plans

I still like the cross-review loop:

- get a plan from one

- have the other critique it

- then tighten from there

That workflow still feels more robust than betting on one model's first answer, no matter how good that model is. The builder-intent row I walked through above is exactly why I still like this setup.
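
The three steps above can be sketched as a small loop. This is a minimal illustration, not a real integration: `Model` is a hypothetical callable standing in for whichever tool plays planner or critic, and the prompt wording is invented for the example.

```python
from typing import Callable

# Hypothetical stand-in for a model or tool: prompt in, text out.
Model = Callable[[str], str]


def cross_review(task: str, planner: Model, critic: Model, rounds: int = 1) -> str:
    """Get a plan from one model, have the other critique it, then tighten."""
    plan = planner(f"Write a plan for: {task}")
    for _ in range(rounds):
        critique = critic(f"Critique this plan for: {task}\n\n{plan}")
        plan = planner(
            f"Revise the plan for: {task}\n\nPlan:\n{plan}\n\nCritique:\n{critique}"
        )
    return plan


def fake_planner(prompt: str) -> str:
    # Fake planner: labels its output so the flow is visible.
    return "revised plan" if prompt.startswith("Revise") else "first plan"


def fake_critic(prompt: str) -> str:
    # Fake critic: returns a fixed critique.
    return "the roadmap and the assigned sprint disagree"


print(cross_review("fix onboarding", fake_planner, fake_critic))
```

Swapping which tool is `planner` and which is `critic` is the whole trick. In the builder-intent episode the first pass came from Opus and the critique from GPT-5.4.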

For pricing

I did not end up with the simplistic cheap switch.

I ended up here:

- not wanting the old $200 Claude Max structure

- wanting more Codex capacity, not less

- accepting ChatGPT Pro at $100 as the more realistic coding-plan answer

- still keeping Claude in the loop for everything that is not code

For shipping

The whole point of the switch was to keep shipping. This was not nineteen days of benchmark theatre. In the same window, Prova's Operator/Builder split went live with a real Builder execution lane, two substantial blog posts went out, and I ran a full translation pass across twelve locales. The experiment did not cost me output.

If you want the most compressed version:

I cancelled Claude Max, but I did not eliminate Claude Code from my work. I moved Claude Code out of the primary coding seat and gave it the rest of the desk.

That is the truest summary I can give you.

So: would I make the same decision again?

Yes.

But I would describe it differently now.

On Apr 3, the framing was:

"I cancelled Claude Max and I am testing whether Codex can replace it."

On Apr 22, the framing is closer to this:

"I cancelled Claude Max, found that Codex is the coding specialist I trust most right now, and now use Claude Code for everything else at my desk — where it is still the best tool I have."

That answer is less binary, more expensive than the simple social-post version, and much closer to the truth.

Frequently asked questions

Did Codex actually replace Claude for coding?

For me, yes. On pure coding work, Codex with GPT-5.4 on xHigh thinking has become my default seat. Opus 4.7 on xHigh can still give me a useful first pass on deeper diagnosis, but it is no longer in the primary coding seat for me, and it feels slower than Codex on lighter coding turns right now.

Did the cheap-Codex story hold up?

Not really. The moment I used it at actual volume, the low-tier economics stopped being the full story. I moved to a higher-priced OpenAI plan because I wanted more capacity, not because the experiment failed.

Do you still need Claude?

Yes, if your work includes writing, design, naming, image prompting, long research threads, or any other taste-heavy or judgment-heavy loop. Opus 4.7 on xHigh has become the default for everything that is not code in my workflow.

What about reliability?

The reliability gap still matters. April reinforced that. The answer is not panic. The answer is backup coverage.

Why publish eleven days early?

Because the pattern settled. Holding the post until May 3 to honour the exact number in the original headline would have been theatre. The honest thing was to say what I now actually do and move on.

So which plan would you buy today?

If your job is mostly coding, I would look seriously at Codex / GPT-5.4 first. If your work spans coding plus a lot of taste-heavy creative work, I would not want to be without Claude. And if $100 a month is out of reach right now, the $20 Codex tier still works for smaller individual projects — just expect to hit limits faster under sustained multi-project work. My own answer right now is not a single-tool answer.

That is the honest nineteen-day update.

Codex took the coding seat.

Claude Code took every other seat.

Which is why a tool literally called Claude Code is now the tool I reach for when the work is everything except code.

And the real lesson, again, is that the workflow matters more than the fandom.

If you ran a similar switch this month, I would genuinely like to know where you landed. Did one tool win outright for you, or did your split get sharper too?

Cheers,

Chandler