The Archive · 2013 — Now

Notes from the practice.

Write to figure out what you actually think. The post is the second draft of a thought you had earlier in the day.

516 essays in the archive · 13 languages · 54 categories

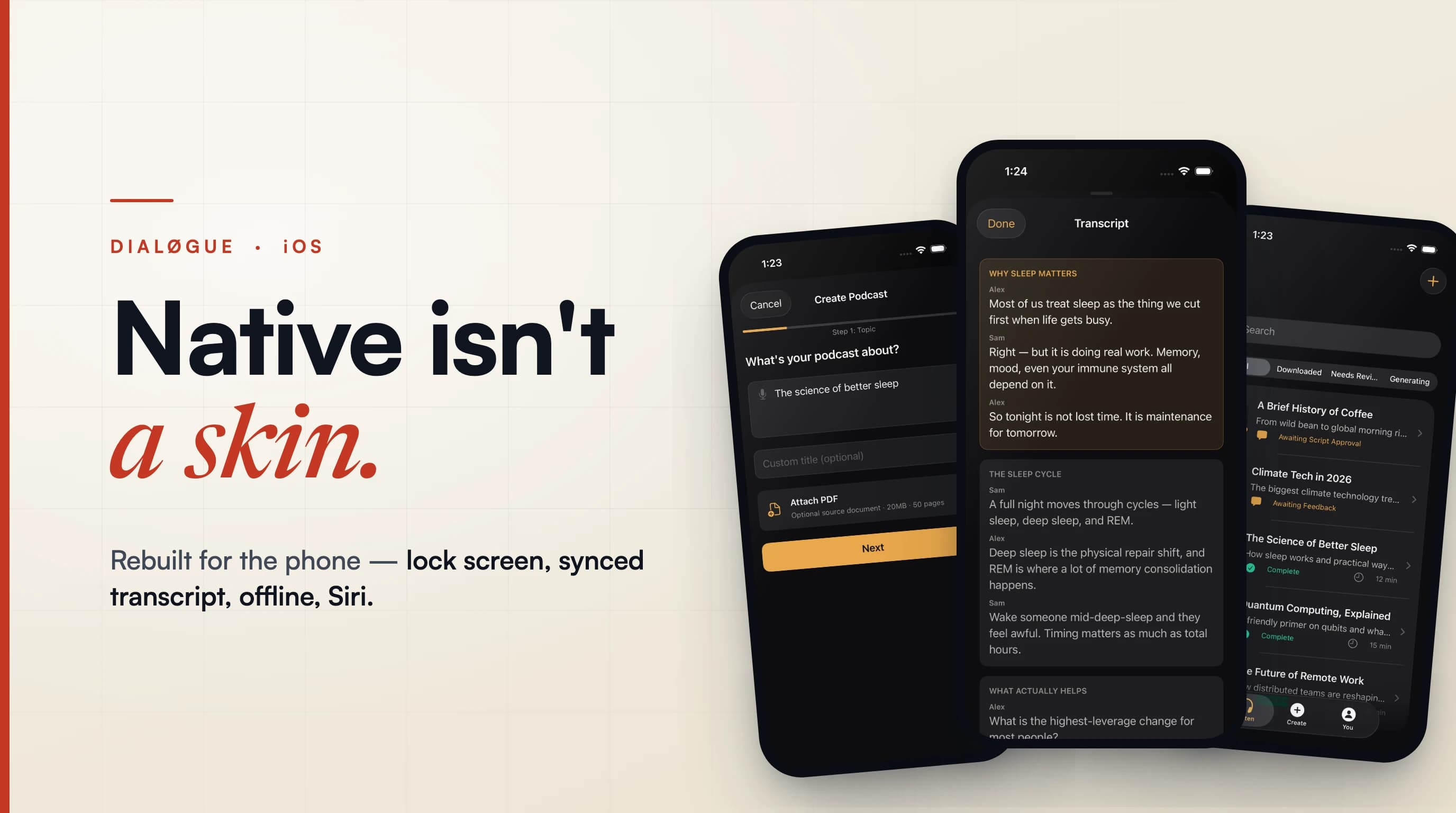

Native Isn't a Skin: Rebuilding DIALØGUE's iOS App

I shipped DIALØGUE's iOS app as a port of the web product, then rebuilt it natively — three tabs, lock-screen audio, a synced transcript, resilient offline, and Siri — because a web app shrunk to a phone is still a web app.

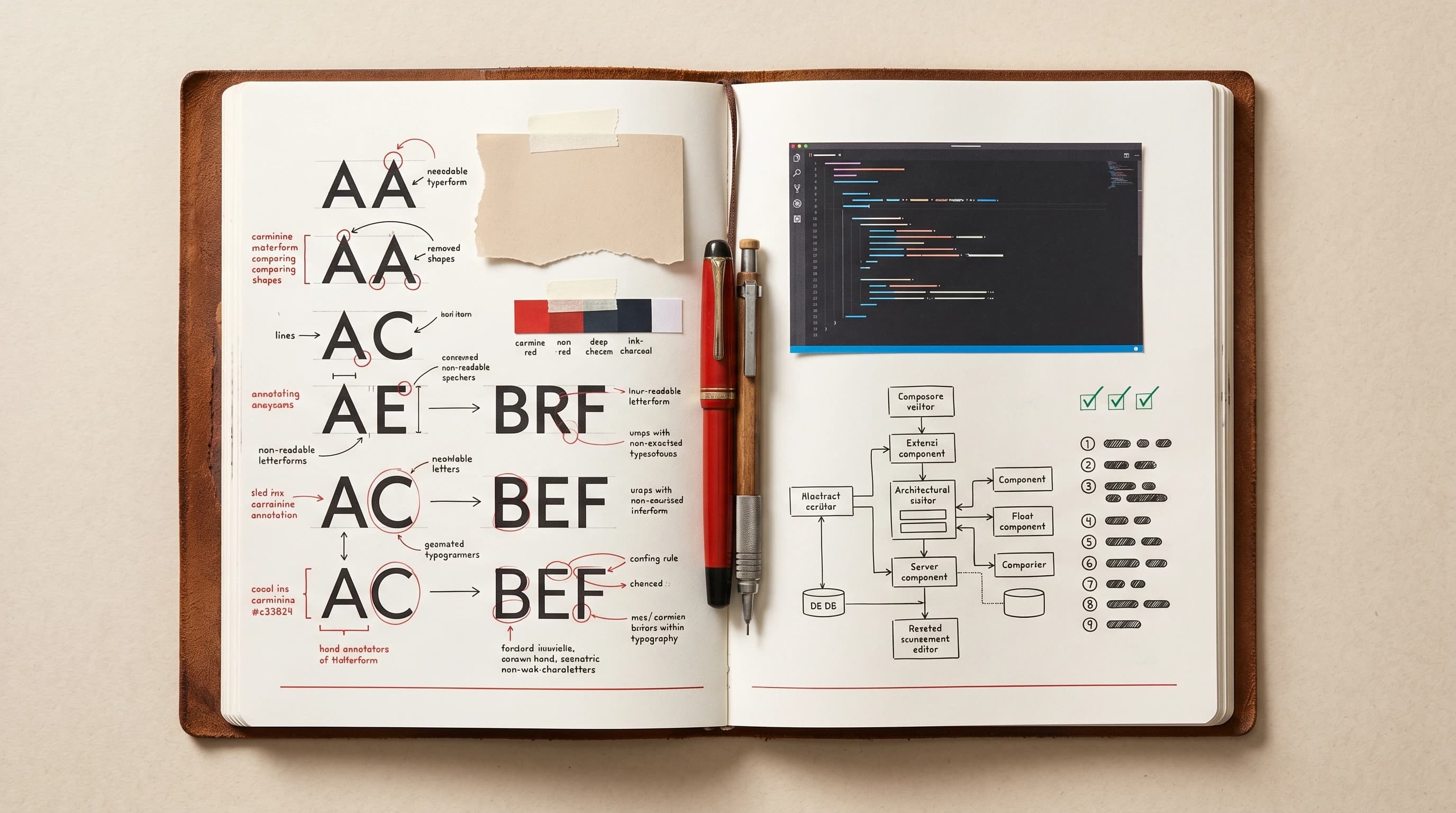

I Rebuilt My Site With Two AI Models: Opus for Design, Codex for Execution

TRANSMISSION served its purpose. Four and a half months later, time to move on. Over the Memorial Day long weekend I rebuilt the site, and the more useful story is the workflow: Claude Opus 4.7 did the design judgment, Codex on GPT-5.5 did the execution, and the /goal function let Codex run autonomously for close to four hours at a stretch.

What Publicis Is Really Buying for $2.2B: Notes on the LiveRamp Deal

Publicis has agreed to acquire LiveRamp for about $2.2B. I do not think the interesting question is whether this replaces the walled gardens or saves the open web. It does not. The better question is what advertisers still need outside closed ecosystems.

The Full Index

Earlier entries

6 Months, 3 Game Projects, 0 Shipped

No. 05 · AI · I Rolled Back 2,724 Lines After One AI Audio Change Broke Production

No. 06 · AI · I'm Using Claude Code for Everything Else But Coding

No. 07 · AI · Two years after my 7 Andrew Ng courses: the 2026 path I'd actually take

No. 08 · AI · The Code Was the Easy Part of Shipping Prova

No. 09 · AI · What Agencies Actually Need From AI Is Not More Content

No. 10 · AI · I Tried to Clip My Course into a YouTube Video. Here's Why I Rebuilt It Instead.

No. 11 · AI · Why I Cancelled Claude Max After 13 Months and What I'm Testing with Codex Next

No. 12 · AI · I'm Dropping My $200 Claude Code Plan After Two Weeks with Codex

Subscribe

Get the next useful note when there is something to say

I write about AI, work, expat life, and building products. Pick the topics you care about, and I'll send the next one when it's worth your time.