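Shipping Prova for real: the code turned out to be the easy part

Prova only started to feel like a product once it began making real promises about progression, billing, auth, and state. The hardest part was not generating code. It was building the contracts around those promises.

The first time Prova felt like a real product was the moment it stopped letting me fool myself.

A homepage can stay aspirational for a long time.

A live product can't.

Once people can sign up, confirm their email, land in the wrong state, hit billing, submit work, and expect the system to remember what happened before, the bottleneck changes.

The product starts making real promises, and the invisible work suddenly becomes very visible.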

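Update, April 16, 2026: Since I wrote the first version of this post, Prova has moved another step toward a clearer Operator/Builder split. I haven't changed the main thesis, but I have updated the product-specific details below to match what is live now.

If you read the three posts I wrote in March and early April about moving from Claude Max to Codex, you know that experiment is still running. I have said publicly that a proper follow-up will come out on May 2, 2026. This is not that post.

If you haven't heard of Prova: it is a coaching product I built for marketers and advertising professionals, split into an Operator path for workflow redesign and a Builder path for shipping a first useful slice.

In my experience this time, the hardest part was not getting an LLM to generate code. It was everything around the code.

If you are building with AI right now, and the thing involves real users, real money, or real product state, my guess is that this is the part the fewest people warn you about.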

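Naming was the easy part

I have to admit that naming Prova was an easy decision. Prova means proof or test, which is exactly the product's job: make people prove the work they did, rather than flatter them into feeling like builders.

The hard part started afterwards. Once Prova was more than a name and a landing page, it started demanding the unglamorous work every real product demands: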

- assessment logic

- onboarding state

- authored progression

- review visibility

- billing flows

- auth hardening

- content publishing

- release gates

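That changed how I look at AI tools, and it changed how I look at product building.

What Prova actually had to become

Prova is now a structured AI builder program that takes marketers and advertising professionals from "using AI tools" to "building real workflows, pilots, and operating systems." In practical terms, that means: assessment and onboarding, a product that is sprint-first rather than chat-first, review-driven progression, durable product state, and a mentor layer that works more like office hours around the current sprint than like a generic AI chat box.

To be clear, I am not saying Prova is "done." It would be easy to say that and move on, but that would be too convenient, and probably not true. The more accurate framing right now is soft launch: the core production system is live and working, but there is still intentional launch-readiness work on the list, including billing portal return-path cleanup and fuller MFA automation coverage. Honestly, I think that is exactly what makes the lesson more useful. This is what real-world product building looks like. Unfinished, but not fake. Just real enough that the next mistake starts to cost something.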

The work I couldn't generate

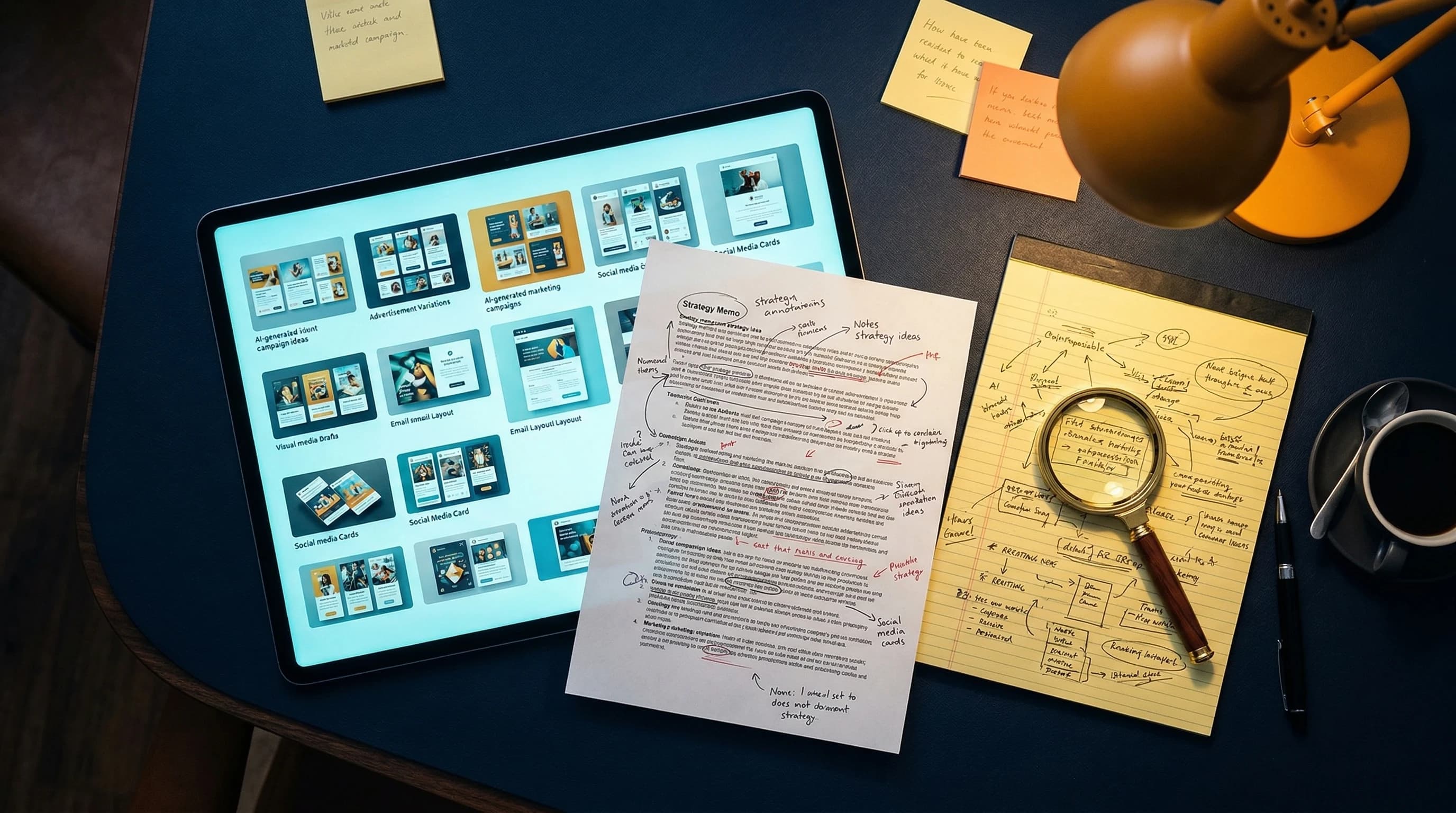

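The easiest version of an AI product to build has always been the demo version.

You can make it look alive very quickly:

- a homepage

- a chat box

- a few nice screenshots

- one fairly convincing product sentence

If you want a launch teaser, that version is useful.

If you want to understand where the real work is, it isn't enough.

For me, the real work surfaced in six places.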

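1. The sprint system had to stop pretending to have structure

I didn't want Prova to become a product that looks structured on the landing page and turns generic the moment you step inside.

Fixed authored sprints were useful for a while.

But the real problem is this: when a learner passes a sprint, their next real gap is not necessarily the next authored sprint in the catalog.

The laziest answer would be:

"Let the model invent the next sprint: title, rubric, review criteria, everything."

I don't trust that approach.

So the system had to get stricter.

The reviewer can still recommend the canonical next sprint when the authored path is the right one. When a known foundation gap shows up, it can assign an adaptive detour: a temporary sprint that helps the learner shore up the basics before returning to the main path. And when the next real gap falls outside the authored catalog, the system requests a composed sprint.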
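The three routing outcomes described above can be sketched as a small decision function. This is a minimal illustration, not Prova's actual router: `Route`, `Assignment`, `route_next_sprint`, and the topic names are all hypothetical, and the rule that a gap already covered by the authored catalog stays on the canonical path is my assumption.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Route(Enum):
    CANONICAL = "canonical"  # next authored sprint on the main path
    DETOUR = "detour"        # temporary sprint for a known foundation gap
    COMPOSED = "composed"    # gap falls outside the authored catalog


@dataclass(frozen=True)
class Assignment:
    route: Route
    topic: str
    return_to: Optional[str]  # canonical sprint to resume afterwards, if any


def route_next_sprint(
    gap_topic: Optional[str],
    next_canonical: str,
    foundation_topics: frozenset,
    authored_topics: frozenset,
) -> Assignment:
    """Pick a route for a learner who has just passed a sprint."""
    if gap_topic is None:
        return Assignment(Route.CANONICAL, next_canonical, None)
    if gap_topic in foundation_topics:
        # Detours are temporary, so remember where to come back to.
        return Assignment(Route.DETOUR, gap_topic, next_canonical)
    if gap_topic in authored_topics:
        # Assumption: a gap the authored path already covers stays on that path.
        return Assignment(Route.CANONICAL, next_canonical, None)
    return Assignment(Route.COMPOSED, gap_topic, next_canonical)
```

The point of returning `return_to` alongside the route is that both detours and composed sprints keep an explicit path back to the canonical roadmap, instead of leaving that to whoever reads the assignment later.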

Written in a blog post, this sounds small.

In a real product, it is not small at all.

Because a composed sprint is not just "dynamic text".

It has to create a placeholder sprint, persist the assignment, preserve the return path to the canonical roadmap, fill the sprint packet, and then let the rest of the system treat that sprint as real product state.

The constraint that keeps this trustworthy is actually simple:

The model cannot invent the standard.

A composed sprint only fills the packet: title, summary, context, assignment, example.

The submission schema and review rubric still come from an authored template sprint.

This is one of the biggest lessons I took from this build.

If you want a dynamic system to stay credible, the contract has to be fixed, and the model can only operate inside it.
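One way to picture that fixed contract is a builder that accepts model output only for the five packet fields and copies the schema and rubric from the authored template. This is a sketch under assumptions: `TemplateSprint`, `ComposedSprint`, and `build_composed_sprint` are hypothetical names, and the real data model is certainly richer than this.

```python
from dataclasses import dataclass

# The only fields the model is allowed to fill (the packet fields listed above).
PACKET_FIELDS = ("title", "summary", "context", "assignment", "example")


@dataclass(frozen=True)
class TemplateSprint:
    # Human-authored sprint that owns the standard. Field names are hypothetical.
    sprint_id: str
    submission_schema: dict
    review_rubric: tuple


@dataclass(frozen=True)
class ComposedSprint:
    template_id: str
    packet: dict
    submission_schema: dict
    review_rubric: tuple


def build_composed_sprint(template: TemplateSprint, model_output: dict) -> ComposedSprint:
    """Accept model output only if it fills the packet and nothing else."""
    extra = set(model_output) - set(PACKET_FIELDS)
    if extra:
        # For example, the model tried to supply its own rubric or schema.
        raise ValueError(f"model output has fields outside the packet: {sorted(extra)}")
    missing = [f for f in PACKET_FIELDS if not str(model_output.get(f, "")).strip()]
    if missing:
        raise ValueError(f"packet is incomplete: {missing}")
    return ComposedSprint(
        template_id=template.sprint_id,
        packet={f: model_output[f] for f in PACKET_FIELDS},
        submission_schema=template.submission_schema,  # never taken from the model
        review_rubric=template.review_rubric,          # never taken from the model
    )
```

Rejecting extra fields outright, rather than silently dropping them, makes an attempt to smuggle in a new standard show up as a failure instead of a quiet no-op.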

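This is also where Codex helped me a lot. Not in the flashy "look what it generated" way. In the more practical way: migrations, router logic, placeholder lifecycle, request state, integration tests, and all the boring contract work that makes a dynamic product less fake.

I'll admit I was briefly tempted by the lazy version. Let the model be clever. Let the system look magical. Clean it up later. But the moment you picture yourself explaining to a paying user why they got a bad sprint assignment, that idea cools off fast.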

2. Retrieval had to become curricular, not just semantic

Once I let the system compose sprints, retrieval quality stopped being just a backend detail.

It became curriculum quality.

If the product is going to compose sprints on tooling, measurement, or competitive intelligence, it can't just fish a few "kind of similar" chunks out of the knowledge base and hope the packet reads as coherent.

So the knowledge base itself had to get smarter.

We rebuilt it from the real corpus: blog posts, course modules, transcripts, companions, templates, deep-dive resources.

Then we chunked each source according to its nature.

Narrative blog posts can keep larger chunks.

Instructional modules need heading-first instructional chunks.

Templates and reference assets need structured chunking that preserves tables, checklists, slide references, and quick-reference sections, rather than flattening everything into generic paragraphs.
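A rough sketch of chunking by source type might look like the following. It assumes the sources are Markdown-like text with `#` headings and blank-line-separated blocks, which is my assumption rather than a stated fact, and the function names, the source kinds in `CHUNKERS`, and the `max_chars` default are hypothetical.

```python
import re


def chunk_narrative(text: str, max_chars: int = 1200) -> list[str]:
    """Merge paragraphs into larger chunks of up to max_chars each."""
    chunks, current = [], ""
    for para in (p.strip() for p in text.split("\n\n") if p.strip()):
        if current and len(current) + len(para) + 2 > max_chars:
            chunks.append(current)
            current = para
        else:
            current = f"{current}\n\n{para}" if current else para
    if current:
        chunks.append(current)
    return chunks


def chunk_instructional(text: str) -> list[str]:
    """Start a new chunk at every heading so the heading leads its content."""
    parts = re.split(r"(?m)^(?=#{1,6} )", text)
    return [p.strip() for p in parts if p.strip()]


def chunk_structured(text: str) -> list[str]:
    """Keep each blank-line-separated block (table, checklist) intact."""
    return [b.strip() for b in re.split(r"\n\s*\n", text) if b.strip()]


# Hypothetical mapping from source kind to chunking strategy.
CHUNKERS = {
    "blog_post": chunk_narrative,
    "course_module": chunk_instructional,
    "template": chunk_structured,
}


def chunk_source(kind: str, text: str) -> list[str]:
    return CHUNKERS[kind](text)
```

The design choice worth noticing is the dispatch table: each source type gets its own strategy, so a table in a template is never merged into a neighbouring paragraph just because a generic size limit allowed it.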

Then came tagging.

Not just "what is this about?", but also:

- what type of chunk this is

- which topic tags it fits

- which audience it suits

- which difficulty level it belongs to

With that in place, retrieval stops being "nearest vector wins" and gets closer to curricular matching.

The product can ask:

"Give me grounded material for this topic, this audience, this level."

That is a far more serious system than RAG bolted onto a demo.
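To make the difference from "nearest vector wins" concrete, here is a minimal sketch that filters on the curriculum tags first and only then ranks by semantic similarity. The `Chunk` fields, the `level_window` tolerance, and the audience labels in the usage note are assumptions for illustration, and `similarity` stands in for whatever score the vector search returns.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    chunk_type: str       # e.g. "narrative", "instructional", "template"
    topics: frozenset
    audiences: frozenset
    difficulty: int
    similarity: float     # precomputed semantic score against the query


def curricular_retrieve(chunks, topic, audience, level, k=3, level_window=1):
    """Filter on curriculum tags first, then rank by semantic similarity."""
    eligible = [
        c for c in chunks
        if topic in c.topics
        and audience in c.audiences
        and abs(c.difficulty - level) <= level_window
    ]
    return sorted(eligible, key=lambda c: c.similarity, reverse=True)[:k]
```

With this shape, a chunk tagged for a different audience is excluded even if it has the highest similarity score, which is exactly the case a purely semantic retriever gets wrong.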

3. Evals had to test the pipeline, not just the model

I didn't want to declare victory just because a composed sprint looked decent in the UI. So the eval system had to grow along with the product.

First came a sprint review eval harness. Then composition got a retrieval gate: a pass/fail check on whether tagged retrieval actually improves grounded material over an untagged baseline. Then came a full end-to-end harness: compose the sprint packet, generate schema-valid synthetic submissions, hand them to the real reviewer, and judge the result on grounding, audience differentiation, difficulty gradient, curriculum coherence, review quality, and comparability with authored work.
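The retrieval gate can be pictured as a simple comparison over a set of eval cases. The sketch below is an assumption about its shape, not the real harness: the metric (share of retrieved chunks that appear in a hand-labelled relevant set), the case dictionary keys, and the `min_lift` threshold are all hypothetical.

```python
from statistics import mean


def grounded_hit_rate(retrieved_ids, relevant_ids) -> float:
    """Share of retrieved chunks that are in the hand-labelled relevant set."""
    if not retrieved_ids:
        return 0.0
    return sum(1 for i in retrieved_ids if i in relevant_ids) / len(retrieved_ids)


def retrieval_gate(cases, min_lift=0.05) -> bool:
    """Pass only if tagged retrieval beats the untagged baseline on average."""
    tagged = mean(grounded_hit_rate(c["tagged"], c["relevant"]) for c in cases)
    baseline = mean(grounded_hit_rate(c["untagged"], c["relevant"]) for c in cases)
    return tagged - baseline >= min_lift
```

Requiring a minimum lift, rather than any improvement at all, keeps the gate from passing on noise when tagged and untagged retrieval are effectively tied.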

The goal is to stop "personalized progression" from becoming fake personalization. If the system wants to say "this next sprint is actually for you", it needs more than a decent-looking packet. It needs evidence:

- whether the packet is grounded

- whether track framing actually changes the work product

- whether level 2 and level 5 are materially different

- whether the reviewer still makes the correct pass/revise call

- whether the composed sprint still feels like part of the curriculum rather than floating outside it

That loop exposes real problems. Weak synthetic revise submissions were still too strong. Audience framing collapsed across tracks. Measurement packets sounded fine but didn't actually answer the different trust questions that different learners have.

One of the more humbling moments for me was discovering that something could look "pretty good" in the UI and then fail the moment I asked a stricter question. A packet could be smooth but too generic. A revise case could be plausible but too easy. In a way, that was quite good for my ego.

The system got better not because I had one good prompt, but because the product could fail somewhere public-to-me, inside the eval harness, before it failed somewhere public-to-users. Not a miracle. A controlled loop.

4. Trust surfaces had to be real

This is another place where real products and demos part ways.

If:

- email confirmation breaks

- Google sign-in is flaky

- users get stuck in an auth loop

- abuse controls are missing

- account hardening looks like an afterthought

then nobody will care how elegant your AI layer is.

For Prova, the credibility layer ended up including:

- email confirmation that takes people back to the right product flow

- Google SSO

- optional app-based two-factor auth (TOTP MFA)

- Turnstile protection on auth and assessment

A lot of people don't brag about this kind of work because it sounds operational and boring.

I think that is a mistake.

Operational and boring is exactly where products earn trust or quietly lose it.

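5. Billing had to be testable, not just imaginable

I am increasingly skeptical of product builders who talk about monetization as if it were as simple as a Stripe screenshot.

Billing gets real very quickly.

The question is not only:

"Can someone technically pay?"

It is:

- what happens during the trial state

- what happens when webhook state arrives late

- how to test safely without doing theater on your own revenue surface

- how to verify the whole flow without inventing one-off exceptions that make the system less reliable later

Prova now has a real subscription flow, plus an internal QA checkout path dedicated to safer billing verification. It sounds minor. It isn't. The more serious the product gets, the more you need a way to test commercial logic without fooling yourself about what has been verified and what hasn't.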
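The "webhook state arrives late" question is the kind of thing that is easier to reason about in code. Below is a minimal sketch of one common approach, assuming each billing event carries a unique id and a creation timestamp: apply events idempotently and refuse to let an older event overwrite newer state. `SubscriptionState` and `apply_billing_event` are hypothetical names, and this is not a description of how Prova or Stripe actually handle it.

```python
from dataclasses import dataclass, field


@dataclass
class SubscriptionState:
    status: str = "none"
    last_event_at: int = 0    # creation time of the newest applied event
    seen_event_ids: set = field(default_factory=set)


def apply_billing_event(state, event_id, created_at, new_status) -> bool:
    """Apply an event unless it is a duplicate or older than the current state."""
    if event_id in state.seen_event_ids:
        return False          # duplicate delivery, already handled
    state.seen_event_ids.add(event_id)
    if created_at < state.last_event_at:
        return False          # arrived late; newer state is already applied
    state.status = new_status
    state.last_event_at = created_at
    return True
```

A handler shaped like this is also straightforward to exercise from a QA path, because you can replay the same events in a different order and check that the final state does not change.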

6. Operations had to become part of the product

One of the things that first made Prova feel real was that I had to treat it as a different operational system, not a feature tucked inside an existing site: separate production system, separate Supabase project, separate Vercel project, separate auth configuration, separate billing setup, separate release gates.

That sentence isn't sexy, but it may be the most important one.

Because products don't only break at the UI layer. They break where systems touch, where assumptions leak, where environments drift, and where someone says "we will fix that after launch" and the after-launch version never really arrives.
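One small, hedged example of turning "separate everything" into a release gate: compare the settings of the two systems and fail the release if any value that should be isolated is shared. The key names below are hypothetical placeholders, not the real configuration, and an actual gate would read them from the deployment environment rather than from literal dictionaries.

```python
# Hypothetical names for settings that must never be shared between systems.
ISOLATED_KEYS = ("SUPABASE_PROJECT_REF", "VERCEL_PROJECT_ID", "STRIPE_WEBHOOK_SECRET")


def shared_settings(product_env: dict, other_env: dict, keys=ISOLATED_KEYS) -> list:
    """Return the keys whose values are identical in both environments."""
    return sorted(
        k for k in keys
        if k in product_env and product_env[k] == other_env.get(k)
    )


def isolation_gate(product_env: dict, other_env: dict) -> bool:
    """Pass only if no isolated setting is shared with the other system."""
    return not shared_settings(product_env, other_env)
```

It is a deliberately boring check, but it catches the specific drift where a copied environment file quietly points a new product at an old project.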

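The longer I work on Prova, the more I respect these boring operational sentences.

If you want a fast pressure test for your own AI product, ask:

- which standard has to stay fixed no matter how dynamic the model gets

- which kind of retrieval actually keeps output grounded

- which eval can catch fake personalization before a user does

- which auth, billing, or state edge would embarrass you tomorrow

If those answers are still fuzzy, your product is probably still closer to a demo than a system.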

The proof that actually matters

I don't want to talk about productization only in abstractions, because that makes it sound more philosophical than it really is.

The proof that actually matters is not "how many migrations did I write?"

It is what actually works for a real user right now.

In this soft-launch phase, the product can credibly say:

- email signup, confirmation, and password recovery work

- Google SSO works

- optional TOTP MFA works

- downloadable resources and signed downloads work

- sprint submission and AI review work in production

- Stripe checkout handoff and billing portal access work

That is the proof at the buyer-facing layer.

Underneath, the repo and rollout work add system proof:

- 4,390 production knowledge-base rows published and tagged for composition retrieval

- a tagged retrieval gate that beats the untagged baseline

- a full 18-case composition eval suite that passes its own gate

- a safer internal QA checkout path for billing verification

What I don't have yet is broad external proof: a meaningful number of users saying, "this changed outcomes for me." It is too early to claim that, and I don't want to fake it.

I list these things because if you only talk in launch language, it is really easy to underestimate how much it takes to turn an idea into a product.

What matters is not the counts themselves.

It is what the counts represent:

- the sprint system is no longer decorative

- retrieval is no longer fuzzy

- evaluation is no longer performative

- product state is durable enough for people to rely on

Invisible work is still work.

And it is usually the harder work.

How this changed my thinking

I still think AI tools matter. Of course they do. Codex was very useful in this push because it felt competent, careful, and methodical. It is well suited to pushing engineering work forward alongside me without much emotional theater. When the work becomes taste-heavy and creative, Claude still feels better to me. The full 30-day Codex verdict will be published on May 2, 2026, and that post is the right place to cover the cost timeline, the limits story, and how my day-to-day working model changed.

But Prova pushed me to a more important conclusion:

I think AI can accelerate implementation, but it does not remove the need for judgment about product boundaries, sequencing, credibility, and operational truth. That judgment is still the work.

A landing page is not a product. A named concept is not a product. A generated component is definitely not a product.

For me, something starts to feel like a product when the invisible architecture behind the visible experience starts to exist. Once real users can come in, pay, progress, get blocked, recover, submit work, and trust the state they see, you are no longer playing with a demo. You are making promises.

And I think promises are where product building gets serious. I respect that part more now. Honestly, I also think builders should talk about it more.

Frequently asked questions

What is Prova?

Prova is a structured AI builder program that takes marketers and advertising professionals from "using AI tools" to "building real workflows, pilots, and operating systems." At the product level, it is assessment, onboarding, sprint progression, review logic, resources, billing, auth, and product state working together, not an AI chat box pretending to do everything.

What is a composed sprint in Prova?

A composed sprint is a sprint packet generated in response to a real learner gap, used when that gap falls outside the current authored catalog. The key constraint is that the model cannot invent the standard. The packet is dynamic, but the submission schema and review rubric still come from an authored template sprint.

Why does AI product building get harder after the demo?

Because once real users can sign up, pay, recover from errors, submit work, and trust the state they see, the product starts making promises. At that point, the invisible systems around the AI layer, such as auth, retrieval quality, evals, billing, and operations, matter more than how impressive the first output looked in the demo.

If you are building with AI right now, I would genuinely like to know:

At what moment did your project start to feel uncomfortably real, the moment the demo became a promise?

That's all for today.

Cheers, Chandler