What Agencies Actually Need From AI Is Not More Content

I keep seeing AI tools pitch agencies on content volume. But if you've ever managed real client relationships, you know the harder problem is trust: isolation, permissions, context, and not leaking one client's thinking into another's.

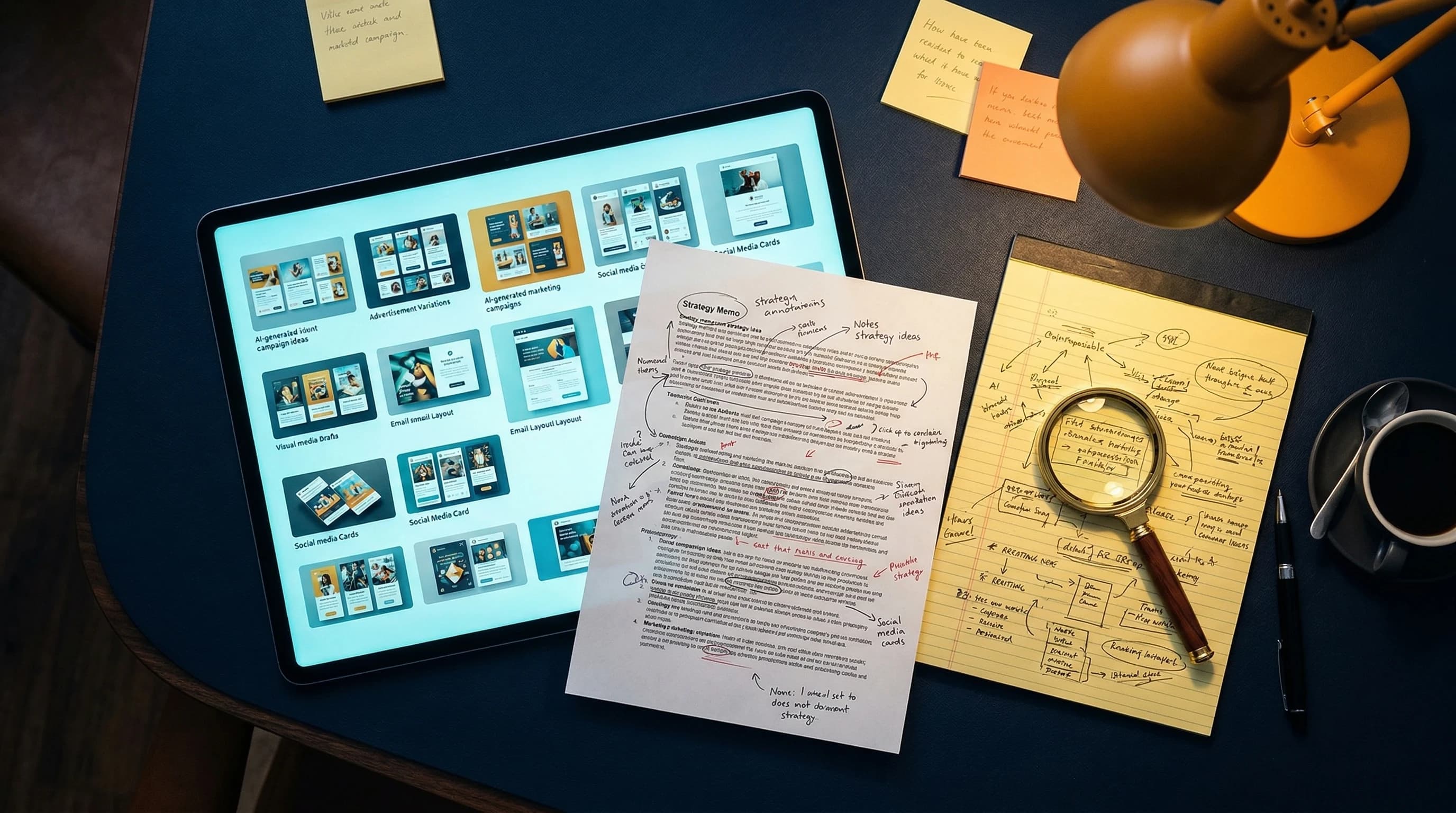

I knew I was building the wrong thing when I caught myself imagining an agency owner getting excited about "50 LinkedIn post ideas in 10 seconds."

Not because content ideas are useless. They're not.

But because if you've spent any real time around agencies, you know that is not the scary part of the job.

The scary part is trust.

It's the account manager wondering whether a system might accidentally mix Client A and Client B.

It's the strategist thinking, "Can I safely use this on a competitive account?"

It's the agency owner asking a much less sexy question than any AI homepage wants to answer:

What happens if this thing leaks one client's intelligence into another client's work?

That question matters a lot more than "how many captions can it generate?"

I think a big chunk of the AI software market still misunderstands agencies because it misunderstands the real work. Agencies do not mainly suffer from a shortage of words. They suffer from complexity:

- multiple clients

- multiple brands

- multiple internal roles

- multiple approval layers

- multiple versions of "who is allowed to see what"

So when an AI vendor shows up and says, "Great news, now you can make more content faster," part of me wants to say: have you ever actually sat inside an agency workflow?

Because the more I built, the clearer it became that agencies do not first need more content.

They need better infrastructure for trust.

The Agency Problem Is Not Volume. It's Risk

I think this is easiest to understand if you've ever had responsibility for client work with real stakes attached.

When you're handling one brand, life is simpler. Your notes are your notes. Your positioning work stays in one lane. Your mistakes are still painful, but at least they're local.

Agencies don't live there.

Agencies are juggling:

- different brand voices

- different categories

- different approval chains

- different definitions of success

- clients who may even compete with each other

That means the cost of a mistake is not "we made a mediocre draft."

Sometimes the cost is:

- we sent the wrong thing to the wrong client

- we exposed the wrong context in the wrong workspace

- we made the platform feel unsafe

- the client now wonders what else is sloppy behind the scenes

And once a client starts asking that last question, you're already in expensive territory.

This is why I get skeptical when agency AI is sold almost entirely through output examples. A demo of generated content proves the model can generate content. Fine. But for agencies, the more important proof is architectural.

Show me:

- where the data lives

- how client context is isolated

- who can access what

- whether approvals are built in

- whether one client's work can contaminate another's

That is the product story agencies actually care about, even if it is much less fun to put in a launch video.

I Think My Advertising Background Made This Obvious Faster

Maybe because I came from advertising, I never really believed the "content is the bottleneck" story for agencies.

Don't get me wrong, agencies absolutely produce content. A lot of it. And yes, there are real efficiency gains available there.

But what makes agency work hard is not usually the absence of a first draft.

It's the operating environment around the draft.

Who reviewed it? Which client is this for? What context shaped this recommendation? Was this generated with the right brand constraints? Did someone accidentally reuse the wrong assumptions? Who is allowed to approve it?

If you're in-house, some of that still matters.

If you're an agency, all of it matters every day.

That's why I made what felt like a slightly unreasonable decision early on: I started building multi-tenancy almost immediately. Day 2, basically. Which, in hindsight, was either disciplined or slightly insane depending on how charitable you're feeling.

At the time I only had one working agent. Building client isolation that early felt premature.

It also turned out to be correct.

Because once you see agencies clearly, you realize they are not just "SMEs with more users." They have a fundamentally different operating model.

The Day I Realized org_id Wasn't Going to Save Me

My first version of multi-tenancy was the classic optimistic-builder version.

Add org_id to everything.

Write your policies.

Trust the filters.

Call it a day.

This works for a surprising amount of software. It also gives you the comforting illusion that you've solved isolation when what you've really solved is basic scoping.

For my product, it was not enough.

Because agencies did not just have one organizational layer. They had clients under the organization. And each client needed its own context, outputs, history, and safety boundaries.

That meant I was trying to force two different data models into one mental shortcut:

- SME: one org, one business context

- Agency: one org, multiple client contexts under it
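To make the difference between those two shapes concrete, here is a minimal sketch. It is not the product's real schema: `Organization`, `ClientContext`, and `brand_notes` are hypothetical names chosen for illustration, and the only point is that an agency's org holds several contexts that each need their own fence.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ClientContext:
    """One client's fenced-off world (hypothetical fields for illustration)."""
    client_id: str
    brand_notes: tuple[str, ...] = ()


@dataclass
class Organization:
    """An org owns one or more client contexts."""
    org_id: str
    clients: dict[str, ClientContext] = field(default_factory=dict)

    def add_client(self, client_id: str, *notes: str) -> ClientContext:
        # Refuse duplicates so two contexts can never share an identifier.
        if client_id in self.clients:
            raise ValueError(f"client {client_id!r} already exists in {self.org_id!r}")
        ctx = ClientContext(client_id=client_id, brand_notes=tuple(notes))
        self.clients[client_id] = ctx
        return ctx


# SME: one org, one business context.
sme = Organization(org_id="bakery")
sme.add_client("bakery", "warm, local, no jargon")

# Agency: one org, several client contexts that must never blend.
agency = Organization(org_id="agency-1")
agency.add_client("client-a", "premium, understated")
agency.add_client("client-b", "loud, discount-driven")
```

With only `org_id` as the boundary, both of the agency's clients would sit inside the same scope, which is exactly the shortcut that breaks down.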

You can fake this for a while in application logic. People do. But the more I looked at it, the more I realized I was building a system that could look correct while still being structurally too trusting.

That's a bad combination.

It took a rebuild to get right. Separate routing. Separate schema logic. More guardrails at the database level. More explicit context handling. Less "just remember to filter correctly everywhere."

Annoying? Yes.

Worth it? Also yes. Though I'll be honest: even after the rebuild, I found another gap weeks later. Users assigned to specific clients could still see data from other clients through a different query path. The architecture was better, but not complete, and I'm still not fully confident it's airtight.

Because agency trust should not rely on a developer remembering every if/else branch at 11:30 PM.
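One way to rely less on memory is to route every read and write through a single choke point that demands an explicit scope. The sketch below assumes an in-memory store and hypothetical names (`Scope`, `ClientStore`, `AccessDenied`). It is an illustration of the idea, not the rebuilt system described above, and a real version would also want enforcement at the database level.

```python
from dataclasses import dataclass


class AccessDenied(Exception):
    """Raised when a user reaches for a client they are not assigned to."""


@dataclass(frozen=True)
class Scope:
    """Hypothetical request scope: who is asking, and for which client."""
    user_id: str
    client_id: str


class ClientStore:
    def __init__(self) -> None:
        self._docs: dict[str, list[str]] = {}         # client_id -> documents
        self._assignments: dict[str, set[str]] = {}   # user_id -> client_ids

    def assign(self, user_id: str, client_id: str) -> None:
        self._assignments.setdefault(user_id, set()).add(client_id)

    def _require(self, scope: Scope) -> None:
        # The single check every query path must pass through.
        if scope.client_id not in self._assignments.get(scope.user_id, set()):
            raise AccessDenied(f"{scope.user_id!r} is not assigned to {scope.client_id!r}")

    def add(self, scope: Scope, text: str) -> None:
        self._require(scope)
        self._docs.setdefault(scope.client_id, []).append(text)

    def read(self, scope: Scope) -> list[str]:
        self._require(scope)
        # Return a copy so callers cannot mutate stored documents.
        return list(self._docs.get(scope.client_id, []))


store = ClientStore()
store.assign("maya", "client-a")
store.add(Scope("maya", "client-a"), "Positioning draft v2")

try:
    store.read(Scope("maya", "client-b"))  # maya is not assigned to client-b
except AccessDenied as err:
    print("blocked:", err)
```

Because there is no method that reads documents without a `Scope`, a new query path cannot quietly skip the assignment check.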

AI Makes the Trust Problem Worse, Not Better

This is the part I think the category still understates.

AI is not just another UI layer on top of agency work. It changes the risk profile because now you have a system that can synthesize context, not just store it.

Which is powerful.

And also exactly why bad boundaries are unacceptable.

If an AI system has access to the wrong context, it doesn't merely expose raw data. It can remix it. It can quietly let Client A's insights inform Client B's strategy. It can turn isolation mistakes into polished-looking outputs, which is honestly worse than a visible bug because it is harder to spot.

That's why general-purpose AI tools make me nervous in agency environments when people start using them casually across multiple accounts.

You can get away with that for a while.

Then one day someone notices the language sounds familiar.

Or a recommendation includes a competitor framework that should not have been in that workspace.

Or a client sees something that makes them wonder whether your systems are compartmentalized at all.

Once that doubt enters the relationship, you are not fixing a content workflow. You are repairing belief.

Good luck doing that with a few extra captions.

What Agencies Actually Need Instead

If I strip away all the AI theater, I think agencies need a few unglamorous things much more urgently:

1. Client-safe context isolation

Not just account switching.

Not just folders.

Not just "we take privacy seriously" copy.

Real separation — at the database level, not the application level. I mean separate schemas or separate routing, not a shared table with a client_id column and a prayer that every query filters correctly.
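As a rough sketch of what "separate routing" can look like, the example below gives each client its own database and hands out connections by client. SQLite in-memory databases stand in for whatever database is actually in use, and `ClientDatabases`, `provision`, `for_client`, and the `insights` table are hypothetical names. Note that no table carries a `client_id` column, so there is no filter to forget.

```python
import sqlite3


class ClientDatabases:
    """Routes each client to its own SQLite database (in-memory here)."""

    def __init__(self) -> None:
        self._connections: dict[str, sqlite3.Connection] = {}

    def provision(self, client_id: str) -> None:
        if client_id in self._connections:
            raise ValueError(f"database for {client_id!r} already exists")
        # Each ":memory:" connection is a separate, private database.
        conn = sqlite3.connect(":memory:")
        conn.execute(
            "CREATE TABLE insights (id INTEGER PRIMARY KEY, body TEXT NOT NULL)"
        )
        self._connections[client_id] = conn

    def for_client(self, client_id: str) -> sqlite3.Connection:
        try:
            return self._connections[client_id]
        except KeyError:
            raise LookupError(f"no database provisioned for {client_id!r}") from None


dbs = ClientDatabases()
dbs.provision("client-a")
dbs.provision("client-b")

# Write into client A's database only.
dbs.for_client("client-a").execute(
    "INSERT INTO insights (body) VALUES (?)", ("Q3 pricing test results",)
)

rows_a = dbs.for_client("client-a").execute("SELECT body FROM insights").fetchall()
rows_b = dbs.for_client("client-b").execute("SELECT body FROM insights").fetchall()
```

A query against client B cannot return client A's rows here, because the rows are not in the database it is talking to. The trade-off is more operational overhead per client, such as migrations and backups.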

2. Role-aware permissions

Different people need different levels of access:

- strategist

- account manager

- approver

- client stakeholder

- admin

Without this, the tool may feel "collaborative" in a demo and chaotic in reality.
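A minimal sketch of role-aware permissions, using the roles listed above. The actions and the mapping of roles to actions are assumptions made up for illustration. Every agency will draw these lines differently.

```python
from enum import Enum, auto


class Role(Enum):
    STRATEGIST = auto()
    ACCOUNT_MANAGER = auto()
    APPROVER = auto()
    CLIENT_STAKEHOLDER = auto()
    ADMIN = auto()


class Action(Enum):
    VIEW_DRAFT = auto()
    EDIT_DRAFT = auto()
    APPROVE = auto()
    VIEW_APPROVED = auto()
    MANAGE_USERS = auto()


# Hypothetical policy: deny by default, grant explicitly per role.
PERMISSIONS: dict[Role, frozenset[Action]] = {
    Role.STRATEGIST: frozenset({Action.VIEW_DRAFT, Action.EDIT_DRAFT, Action.VIEW_APPROVED}),
    Role.ACCOUNT_MANAGER: frozenset({Action.VIEW_DRAFT, Action.VIEW_APPROVED}),
    Role.APPROVER: frozenset({Action.VIEW_DRAFT, Action.APPROVE, Action.VIEW_APPROVED}),
    Role.CLIENT_STAKEHOLDER: frozenset({Action.VIEW_APPROVED}),
    Role.ADMIN: frozenset(Action),
}


def can(role: Role, action: Action) -> bool:
    """Return True only if the role was explicitly granted the action."""
    return action in PERMISSIONS.get(role, frozenset())
```

For example, `can(Role.CLIENT_STAKEHOLDER, Action.VIEW_DRAFT)` is false under this policy, so a client never sees work that has not been approved.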

3. Approval workflows

Agencies are not solo creators flinging ideas directly into the world. There are drafts, comments, reviews, approvals, revisions, and politics. So much politics :P

If the AI system doesn't respect that, it is not respecting agency work.
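One way to make approvals structural is a small state machine that rejects any shortcut. The states and transitions below are assumptions for illustration (`Deliverable` and `move_to` are hypothetical names), and a real workflow would also record who made each move.

```python
from enum import Enum


class State(Enum):
    DRAFT = "draft"
    IN_REVIEW = "in_review"
    CHANGES_REQUESTED = "changes_requested"
    APPROVED = "approved"


# Allowed moves. Anything not listed here is rejected.
TRANSITIONS: dict[State, set[State]] = {
    State.DRAFT: {State.IN_REVIEW},
    State.IN_REVIEW: {State.APPROVED, State.CHANGES_REQUESTED},
    State.CHANGES_REQUESTED: {State.IN_REVIEW},
    State.APPROVED: set(),
}


class Deliverable:
    def __init__(self, title: str) -> None:
        self.title = title
        self.state = State.DRAFT
        self.history: list[State] = [State.DRAFT]

    def move_to(self, new_state: State) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(
                f"cannot move {self.title!r} from {self.state.value} to {new_state.value}"
            )
        self.state = new_state
        self.history.append(new_state)


post = Deliverable("Launch announcement")
post.move_to(State.IN_REVIEW)
post.move_to(State.CHANGES_REQUESTED)
post.move_to(State.IN_REVIEW)
post.move_to(State.APPROVED)
```

An AI-generated draft enters at `DRAFT` like everything else, so it cannot reach a client without passing through review.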

4. Shared memory inside the right boundary

This one matters a lot.

Within a given client context, the system should absolutely get smarter over time. That's where AI becomes useful. But it has to compound inside the correct fence, not across all work indiscriminately.

5. Strategic intelligence before execution

Again, not anti-content. Just anti-content-first as the whole story.

Agencies need help understanding:

- what the client should say

- what the client should emphasize

- what is happening competitively

- where the strategy is weak

- how to align the team around a recommendation

That is much more valuable than producing another pile of generic deliverables.

The Architecture That Fell Out of the Problem

I didn't start with a feature list. I started with frustrations.

Agencies repeat context too often. So I invested in progressive learning, where the system keeps what it learns about a client instead of asking for it again, before I had a single agency customer. Probably prematurely, but it turned out to matter.

Client context can't be a polite suggestion. So I rebuilt the data model around real separation, not just scoped queries.

And agency work is never one person chatting with a bot in a vacuum. So collaboration and approval workflows had to be structural, not bolted on.

I'm not saying I got all of this right. I'm saying the problems forced specific architectural decisions that most general-purpose AI tools haven't had to make — or haven't chosen to make.

The Thing I Wish More AI Vendors Would Admit

Agency buyers are often evaluating two products at once:

- the product in the demo

- the future problem the product might create

That's why glossy AI output alone is not convincing. The buyer is not just asking, "Can this help us?" They're also asking, "Can this hurt us later?"

And if you can't answer that second question well, your beautiful generated output is mostly decoration.

I don't think agencies need more decoration. I think they need systems they can trust in front of clients.

That's it from me.

If you work inside an agency, I'd especially love to hear your take. Has the AI tooling you've seen actually reduced operational risk, or has it mostly just increased the amount of content your team can produce while the real headaches stay exactly where they were?

Cheers, Chandler